Reading Phil’s multiple reviews of Competency-Based Education (CBE) “LMSs”, I am struck by one implication: we see a much more rapid and coherent progression of learning platform designs when designers start with a particular pedagogical approach in mind. CBE is loosely tied to a family of pedagogical methods, perhaps the most important of which at the moment is mastery learning. In contrast, questions about why general LMSs aren’t “better” raise the question, “Better for what?” Since conversations about LMS design are usually divorced from conversations about learning design, we end up pretending that the foundational design assumptions in an LMS are pedagogically neutral when they are actually assumptions based on traditional lecture/test pedagogy. I don’t know what a “better” LMS looks like, but I am starting to get a sense of what an LMS that is better for CBE looks like. In some ways, the relationship between platform and pedagogy is similar to the relationship former Apple luminary Alan Kay claimed between software and hardware: “People who are really serious about software should make their own hardware.” It’s hard to separate serious digital learning design from digital learning platform design (or, for that matter, from physical classroom design). The advances in CBE platforms are a case in point.

But CBE doesn’t work well for all content and all subjects. In a series of posts starting with this one, I’m going to conduct a thought experiment of designing a learning platform—I don’t really see it as an LMS, although I’m also not allergic to that term as some are—that would be useful for conversation-based courses or conversation-based elements of courses. Because I like thought experiments that lead to actual experiments, I’m going to propose a model that could realistically be built with named (and mostly open source) software and talk a bit about implementation details like use of interoperability standards. But all of the ideas here are separable from the suggested software implementations. The primary point of the series is to address the underlying design principles.

In this first post, I’m going to try to articulate the design goals for the thought experiment.

When you ask people what’s bad about today’s LMSs, you often get either a very high-level answer—“Everything!”—or a litany of low-level answers about how archiving is a pain, the blog app is bad, the grade book is hard to use, and so on. I’m going to try to articulate some general goals for improvement that are in between those two levels. They will be general design principles. Some of them apply to any learning platform, while others apply specifically to the goal of developing a learning platform geared toward conversation-based classes.

Here are four:

1. Kill the Grade Book

One of the biggest problems with mainstream models of teaching and education is their artificiality. Students complete assignments to get grades. Often, they don’t care about the assignment, and the assignments often aren’t designed to entice students to care about them. To the contrary, they are often designed to test specific knowledge or competency goals, most of which would never be practically tested in isolation in the real world. In the real world, our lives or livelihoods don’t depend solely on knowing how to solve a quadratic equation or how to support an argument with evidence. We use these pieces to accomplish more complex real-world goals that are (usually) meaningful to us. That’s the first layer of artificiality. The second layer is what happens in the grade book. Teachers make up all kinds of complex weighting systems: dropping the lowest score, assigning a percentage weight to different classes of assignments, grading on curves, and so on. Faculty often spend a lot of energy first creating and refining these schemes and then using them to assign grades. And they are all made up, artificial, and often flawed. (For example, many faculty who are not in mathematically heavy disciplines make the mistake at one time or another of mixing points with percentage grades, and then spend many hours experimenting with complex fudge factors because they don’t have an intuition for how those two grading schemes interact with each other.)
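To make the points-versus-percentages pitfall concrete, here is a minimal sketch in Python. The scores, the 50/50 weighting, and the function names are all hypothetical, chosen only for illustration. It shows how pooling raw points silently weights each item by its point total, which can differ sharply from the weighting the teacher had in mind.

```python
def pooled_points_grade(items):
    """Sum of earned points over sum of possible points, as a percentage.

    Each item is an (earned, possible) pair. Items with more possible
    points count for more, whether or not the teacher intended that.
    """
    earned = sum(e for e, _ in items)
    possible = sum(p for _, p in items)
    return 100 * earned / possible


def weighted_percent_grade(items, weights):
    """Convert each item to a percentage first, then apply explicit weights."""
    return sum(w * 100 * e / p for (e, p), w in zip(items, weights))


# Hypothetical scores: homework graded in points, exam entered as a percentage.
homework = (10, 20)   # 10 of 20 points, i.e. 50%
exam = (90, 100)      # 90%, which the grade book treats as 90 of 100 points

items = [homework, exam]
pooled = pooled_points_grade(items)                     # exam counts 5x the homework
intended = weighted_percent_grade(items, [0.5, 0.5])    # the 50/50 split the teacher meant

print(f"pooled points: {pooled:.1f}, intended 50/50: {intended:.1f}")
```

With these made-up numbers the two schemes disagree by more than thirteen percentage points, which is the kind of gap that sends people hunting for fudge factors.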

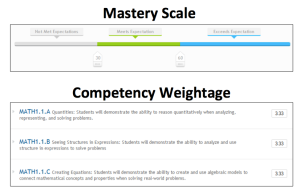

Some of this artificiality is fundamentally baked into the foundational structures of schooling and accreditation, but some of it is contingent. For example, while CBE approaches don’t, in and of themselves, do anything to get rid of the artificiality of the schooling tasks themselves (and may, in fact, exacerbate it, depending on the course design), they can simplify or eliminate a traditional grade book, particularly in mastery learning courses. With CBE in general, you have a series of binary gates: Either you did demonstrate competency or you didn’t. You can set different thresholds, and sometimes you can assess different degrees of competency. But at the end of the day, the fundamental grading unit in a CBE course is the competency, not the quiz or assignment. This simplifies grading tremendously. Rather than forcing teachers to answer questions like, “How many points should each in-class quiz be worth, and what percentage of the total grade should the quizzes count for?” teachers instead have to answer questions like, “How much should students’ ability to describe a minor seventh chord count toward their music theory course grade?” The latter question is both a lot more straightforward and more focused on teachers’ intuitions about what it means for a student to learn what a class has to teach.
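As a rough illustration of grading by competency rather than by assignment, here is a minimal Python sketch. It is not any particular CBE platform's model: the `Competency` class, its field names, the thresholds, and the sample competencies are assumptions made up for this example. Each competency is a binary gate with a teacher-assigned weight, and the course grade is the weighted share of gates passed.

```python
from dataclasses import dataclass


@dataclass
class Competency:
    name: str
    weight: float      # teacher's judgment of how much this competency matters
    threshold: float   # minimum score (0.0 to 1.0) that counts as demonstrated
    best_score: float  # the student's best evidence so far (0.0 to 1.0)

    def demonstrated(self) -> bool:
        """Binary gate: the competency was demonstrated or it was not."""
        return self.best_score >= self.threshold


def course_grade(competencies):
    """Weighted percentage of competencies the student has demonstrated."""
    total = sum(c.weight for c in competencies)
    if total == 0:
        raise ValueError("at least one competency must have a nonzero weight")
    met = sum(c.weight for c in competencies if c.demonstrated())
    return 100 * met / total


# Hypothetical music theory course with three competencies.
music_theory = [
    Competency("describe a minor seventh chord", weight=1, threshold=0.8, best_score=0.9),
    Competency("identify intervals by ear", weight=2, threshold=0.8, best_score=0.85),
    Competency("harmonize a simple melody", weight=1, threshold=0.8, best_score=0.6),
]

grade = course_grade(music_theory)
print(f"course grade: {grade:.0f}%")
```

The only numbers a teacher has to choose in this sketch are the weights and thresholds, and both map directly onto judgments about what students need to know.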

Nobody likes a grade book, so let’s see how close we can get to eliminating the need for one. In general, we want a grading system that enables teachers to make adjustments to their course evaluation system based on questions that are closely related to their expertise—i.e., what students need to know and whether they seem to know it—rather than on their skills in constructing complex weighting schemes. The mechanism by which we do so will be different for discussion-based course components than for many typical implementations of CBE, particularly machine-graded CBE, but I believe that a combination of good course design and good software design can actually help reduce both layers of grading artificiality that I mentioned above.

2. Use scale appropriately

Most of the time the word “scale” used in an educational context attaches to a monolithic, top-down model like MOOCs. It takes a simplistic view of Baumol’s Cost Disease (which is probably the wrong model of the problem to begin with) and boils down to asking, “How can we reduce the per-student costs by cramming more students into the same class?” I’m more interested in a different question: What new models can we develop that harness both the economic and the pedagogical benefits of large-scale classes without sacrificing the value of teacher-facilitated cohorts? Models like Mike Caulfield’s and Amy Collier’s distributed flip, or FemTechNet’s Distributed Open Collaborative Courses (DOCCs). There are almost certainly some gains to be made using these designs in increasing access by lowering cost. They might (or might not) be more incremental than the centralized scale-out model, but they should hopefully not come with the same trade-offs in educational quality. In fact, they will hopefully improve educational quality by harnessing global resources (including a global community of peers for both students and teachers) while still preserving the local support. And I think there’s actually a potential for some pretty significant scaling without quality loss when the model I have in mind is used in combination with a CBE mastery learning approach in a broader, problem-based learning course design. More on that later.

Another kind of scaling that interests me is scaling (or propagating) changes in pedagogical models. We know a lot about what works well in the classroom that never gets anywhere because we have few tools for educating faculty about these proven techniques and helping them to adopt them. I’m interested in creating an environment in which teachers share learning design customizations by default, and teachers who create content can see what other teachers are doing with it—and especially what students in other classes are doing with it—by default. Right now, there is a lot of value to the individual teacher of being able to close the classroom door and work unobserved by others. I would like to both lower barriers to sharing and increase the incentives to do so. The right platform can help with that, although it’s very tricky. Learning Object Repositories, for example, have largely failed to be game changers in this regard, except within a handful of programs or schools that have made major efforts to drive adoption. One problem with repositories is that they demand work on the part of the faculty while providing little in the way of rewards for sharing. If we are going to overcome the cultural inhibitions around sharing, then we have to make the barrier as low as possible and the reward as high as possible.

3. Assess authentically through authentic conversations

Circling back to the design goal of killing the grade book, what we want to be able to do is directly assess the student’s quality of participation, rather than mediate it through a complicated assignment grading and weighting scheme. Unfortunately, the minute you tell students they are getting a “class participation” grade, you immediately do massive damage to the likelihood of getting authentic conversation and completely destroy the chances that you can use the conversation as authentic assessment. People perform to the metrics. That’s especially true when the conversations are driven by prompts written by the teacher or textbook publisher. Students will have fundamentally different types of conversations if their conversations are not isolated graded assignments but rather integral steps on their way to accomplish some larger task. Problem-Based Learning (PBL) is a good example. If you have a course design in which students have to do some sort of project or respond to some sort of case study, and that project is hard and rich enough that students have to work with each other to pool their knowledge, expertise, and available time, you will begin to see students act as authentic experts in discussions centered around solving the course problem set before them.

A good example of this is ASU’s Habitable Worlds, which I have blogged about in the past and which will be featured in an episode of our e-Literate TV series. Habitable Worlds is roughly in the pedagogical family of CBE and mastery learning. It’s also a PBL course. Students are given a randomly generated star field and a semester-long project to determine the likelihood that intelligent life exists in that star field. There are a number of self-paced adaptive lessons built on the Smart Sparrow platform. Students learn competencies through those lessons, but they are competencies that are necessary to complete the larger project, rather than simply a set of hoops that students need to jump through. In other words, the competency lessons are resources for the students. They also happen to be assessments, but that’s not the only reason, and hopefully not the main reason, students have to care about them anymore. The class discussions can be positioned in the same way, given the right learning design. Here’s a student featured in our e-Literate TV episode talking about that experience:

The way the course is set up, students use the discussion board for authentic science-related problem solving. In doing so, they are exhibiting competencies necessary to be a good scientist (or a good problem solver, or a supportive member of a problem-solving team). They have to know when to search for information that already exists on the discussion board, how to ask for help when they are stuck, how to facilitate a problem-solving conversation, and so on. And these are, in fact, more valuable competencies for employers, society, and the students themselves than knowing the necessary ingredients for a planet to be habitable (for example). Yet we generally ignore these skills in our grading and pretend that the knowledge quizzes tell us what we need to know, because those are easier to assess. I would like for us to refuse to settle for that anymore.

This is a great example of how learning design and learning platform design can go hand-in-hand. If the platform and learning design work together to enable students to have their discussions within a very large (possibly global) group of learners who are solving similar problems, then there are richer opportunities to evaluate students’ respective abilities to demonstrate both expertise and problem-solving skills across a wide range of social interactions. Assuming a distributed flip model (where faculty are teaching their own classes on their own campuses with their own students but also using MOOC-like content and discussions that multiple other classes are also using), if you can develop analytics that help the local teachers directly and efficiently evaluate students’ demonstrated skills in these conversations, then you can feed the output of the analytics, tweaked by faculty based on which criteria for evaluating students’ participation they think are most important, into a simplified grading system. I’ll have a fair bit to say about what this could look like in practice in a later post in this series.

4. Leverage the socially constructed nature of expertise (and therefore competence)

Why do colleges exist? Once upon a time, if you went to a local blacksmith that you hadn’t been to before, you could ask your neighbor about his experience as a customer or look at the products the blacksmith produced. If you wanted to hire somebody you didn’t know to work in your shop, you would do the same. You’d generally get a holistic evaluation with some specific examples. “Oh, he’s great. My horse has five hooves. He figured out how to make a special shoe for that fifth hoof and didn’t even charge me extra!” You might gather a few of these stories and then make your decision. One thing you would not do is make a list of the 193 competencies that a blacksmith should have and check to see whether he’s been tested against them.

For a variety of reasons, it’s not that simple to evaluate expertise anymore. Credentialing institutions have therefore become proxies for these sorts of community trust networks. “I don’t know you, but you graduated from Harvard, and I believe Harvard is a good school.” There was some of that in the early days (“I don’t know you, but you apprenticed with Rolf, and I trust Rolf”), but the universities (and other guilds) took this proxy relationship to the next step by asking people to invest their trust in the institution rather than the particular teacher. The paradox is that, in order to justify their authority as reputation proxies, these institutions came under increasing pressure to produce objective-sounding assessments of their students’ expertise. As we go further and further down this road, these assessments look less and less like the original trust network assessment that the credential is supposed to be a proxy for. This may be one reason why a variety of measures show employers don’t pay much attention to where prospective employees get their degrees and don’t have a high opinion of the degree to which college is preparing students for their careers. As somebody who has made hiring decisions in both big and small companies, I can tell you that I don’t remember even looking at the prospective employees’ college credentials. The first screening was based on what work they had done for whom. If the positions had been entry-level, I might have looked at their college backgrounds, but even there, I probably would have looked more at the recommendations, extra-curricular activities, and any portfolio projects. In other words, who will vouch for you, what you are passionate about, and what work you can show. At most, the college degree is a gateway requirement except in a few specific fields. You may have to have one in order to be considered for some jobs, but it doesn’t help you actually land those jobs.
And there is little evidence I am aware of that increasingly fine-grained competency assessments improve the value of the credential. This isn’t to say that there is no assessment mechanism better than the old ways. Nor is it to say anything about the value of CBE either for pedagogical purposes (e.g., the way it is used in the Habitable Worlds example above) or for increasing access to education (and educational credentials) through prior learning assessments and the ability to free students from the tyranny of seat time requirements. It’s just to say that it’s not clear to me that the path toward exhaustive assessment of fine-grained competencies leads us anywhere useful in terms of the value of the credential itself or in fostering the deep learning that a college degree is supposed to certify. In fact, it may be harmful in those respects.

If we could muster the courage to loosen our grip on the current obsession with objective, knowledge-based certification, we might discover that the combination of digital social networks and various types of analytics holds out the promise that we can recreate something akin to the original community trust network at scale. Participants (students, in our case) could be evaluated on their expertise based on whether people with good reputations in their community (or network) think that they have demonstrated expertise. Just as they always have been. And the demonstration of that expertise will be on full display for direct evaluation because the conversation(s) in which the demonstration(s) occurred and got judged by our trusted community members are on permanent digital display. (Hat tip to Patrick Masson, among others, for guiding me to this insight.) The learning design creates situations in which students are motivated to build trust networks in the pursuit of solving a difficult, college-level problem. The platform helps us to externalize, discover, and analyze these local trust networks (even if we don’t know any of the participants).

* * *

Those are the four main design goals for the series. (Nothing too ambitious.) In my next post, I’ll lay out the use case that will drive the design.
