As Phil noted in his analysis of the SJSU report, one of the main messages of the report seems to be that some of what we already know about performance and critical success factors for more traditional online courses also seems to apply to xMOOCs. But how good is the ed tech industry at taking advantage of what we already know?

Not very good, as far as I can tell.

One of the points that the report writers emphasize is that—no surprise—student effort is by far the biggest predictor of student success:

The primary conclusion from the model, in terms of importance to passing the course, is that measures of student effort eclipse all other variables examined in the study, including demographic descriptions of the students, course subject matter and student use of support services. Although support services may be important, they are overshadowed in the current models by students’ degree of effort devoted to their courses. This overall finding may indicate that accountable activity by students—problem sets for example—may be a key ingredient of student success in this environment.

We also know that many of the students in the SJSU MOOCs were at-risk students. They were traditional students who had failed the course for the first time, high school students in an economically disadvantaged neighborhood, and non-traditional students. What do we know about at-risk students? We know that they often need help, and we also know that they are not good at knowing when to get help. They aren’t good at knowing when they are not doing enough and they are also not good at knowing when they are underperforming and are in danger of failing.

We certainly see signs of the latter problem in the SJSU report:

The statistical model pointed to the critical importance of effort. In Survey 3 students indicated that they recognized the need for a sustained effort and the danger of falling behind. In fact, when asked what they would change if starting the semester over knowing what they know now, one of the top choices was to “make sure I don’t fall behind.” Almost two-thirds of survey respondents (65%) in Survey 3 pointed to this change, including 82% of matriculated students in Math 6L and 75% of matriculated students in Math 8. In Stat 95, where students were less likely to fall seriously behind because of stricter adherence to deadlines (see below), 60% of both matriculated and non-matriculated students identified “not falling behind” as a change they would make.

So students in these classes had trouble staying on top of the work, and the evidence (from the statistics class) is that at least part of the problem is an inability to self-regulate rather than pure lack of time. We also see evidence that students in these courses were not good at seeking help:

[O]ne of the top-rated changes students identified in Survey 3 was “more help with course content”. In this area, there was almost no difference between survey responses from matriculated and non-matriculated students with 80% of respondents from both groups rating “more help with content” as a “very important” or “important” change they would like to see Udacity and SJSU make.

This finding, when corroborated with input from faculty (see below) points to an area that may require additional attention. Because while students did not use the opportunity to video-conference with instructors, many students e-mailed their professor, but not with questions about content. Instead, student email communications focused, across the three courses, on questions related to course requirements, assignments and other technical or process-related issues.

Some of the problem could be attributed to students’ lack of awareness that help is available, but you can’t say that for students that actually asked for help and then failed to ask for content help. There is a failure of help-seeking behavior.

As I have written about here before, this is exactly the problem that Purdue’s (and now Ellucian’s) Course Signals retention early warning system was designed to address. The whole thing is designed to prod students who are falling behind to get help. Basically, if students are falling behind (or failing), they get increasingly insistent messages pushing them to get help. At its heart, it is really that simple. And the results that Purdue has gotten are impressive. For example, they have been able to drive much higher utilization of their biology resource center:

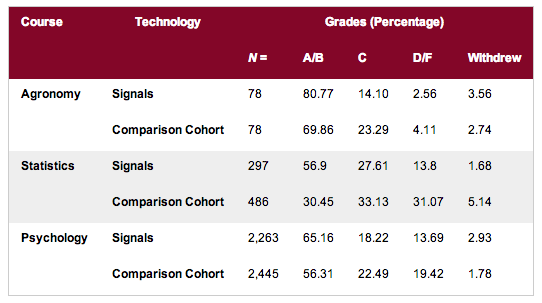

Does an increase in help-seeking behavior yield greater student success? The answer is a resounding yes:

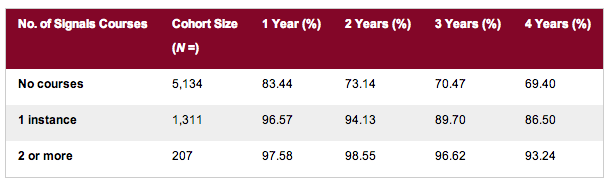

They are seeing solid double-digit improvements in most cases. What is most impressive to me, though, is that these results persist over time, even after the students stop using the system:

Students who took just one Signals-based course are 17% more likely to still be in school in their fourth year of college than those who didn’t. Students who took two or more Signals-based courses are a whopping 24% more likely to be in school in their fourth year than those who didn’t have any. In other words, the technology actually teaches the students skills that are vital to their success. Once they learn those skills, they no longer need the tool in order to succeed. So there is no mystery about what at-risk students need or how technology can help provide it to them. Purdue’s first presentation on Course Signals was in 2006.

This is why I expressed disappointment in my posts about the analytics products from Desire2Learn and Blackboard when, despite obviously following in the footsteps of Course Signals, both products focused on dashboards for teachers. We know that direct and timely intervention is what at-risk students need. We know that this intervention can have substantial and lasting effects. The Purdue model works. I’m sure that either company could sell lots of product if they could credibly claim that they could increase their customers’ four-year retention rates by double digits. But in order to do that, they need to follow Purdue’s example and support direct feedback to the students. This approach, by the way, fits rather well with the MOOC model, where direct instructor intervention is far less likely (or even impossible) on a per-student basis. But Purdue has shown that it also works quite well in a traditional course model and that faculty can get direct benefit and insight from this approach, even if they are not the primary audience.

Can we expect better from the MOOC providers? The early indications are not good. The company and the university, perhaps egged on by the Gates Foundation, rushed courses out for at-risk students with a clearly inadequate focus on getting students to ask for and find the support that they need despite the fact that it is a huge known risk. Anybody with any experience teaching these populations would cite this challenge as a critical success factor—indeed, as the critical success factor. “Fail fast” may be a great mantra for a software startup, but it’s not such a good approach to teaching those students who need our help the most. (Mike Caulfield has a very timely and interesting post on just this subject.) “Measure twice and cut once” might be a better idea. Or even better than that is Steve Jobs’ favorite quote by Igor Stravinsky: “Good artists copy; great artists steal.” Of course, in order to steal like great artists do, you have to start by recognizing that you yourself are not inventing art.

As someone who, along with Hanan Ayad, designed Desire2Learn’s Student Success System (S3), I would like to respond. (Note: I am no longer at Desire2Learn and am not their spokesman. I do want to clarify our design assumptions, as I saw them, from my time there.)

S3 was *not* designed as a dashboard for teachers, either exclusively or primarily. From the very beginning we had three roles in mind: instructor, advisor, and student. In the K-12 context we also designed for a fourth role, the parent.

For the first version we had to put a stake in the ground, and we decided to implement the instructor role first. It is important to observe the distinction between *design* and *implementation*. The design and architecture of S3 supports multiple roles and no particular role is privileged over any other role. Looking at it from the outside, of course, there is no way you could know whether privileging the teacher was in the design or in the implementation.

In a separate post I will elaborate a bit on why someone might implement the teacher role first in such a system. I will also comment on the “Purdue model” using the design/implementation lens.

I do want to take this opportunity to comment on a noxious charge that is sometimes leveled against LMS providers. It’s not in your post and you are not guilty of it, but I have seen it made occasionally by some of your readers. The charge goes something like this: “LMS providers, including Desire2Learn, build their systems ‘first and foremost’ for school administrators and IT people, with ‘instructors a distant second’, and ‘students far far far behind.’”

The charge is utter nonsense but I hear it quite often. During my time at Desire2Learn no one that I came across thought this way, not even remotely. Degree Compass is student-centric; Desire2Learn’s latest acquisition Knowillage is student-centric; Binder is student-centric. I could go on…

The charge is decisively refuted by Desire2Learn’s substantial investments in addressing Accessibility. School administrators (or instructors for that matter) have not exactly been beating down the door calling for improvements in Accessibility. That charge at Desire2Learn has been led by a passionate team in UX and supported every step of the way by John Baker, the CEO. Desire2Learn has continued to make these improvements not because it has been kow-towing to administrators, but because it was the right thing to do to support students who have traditionally been neglected and overlooked.

If that’s not an example of student-centric I don’t know what is.

I couldn’t agree more with the sentiment that educational technology systems need to be more student-centric and you are providing a valuable service to the community to keep shining the spotlight. It’s very much needed. But to quote my favorite educational technology philosopher on the tension between design and implementation, “You can’t always get what you want, But if you try sometimes…”

Thanks for the detailed and honest comments, Al.

As you point out, I haven’t been one to criticize LMS providers heavily for being insufficiently “student-centric”–whatever that means–but since you’ve brought it up, I will make a couple of observations. First of all, I don’t think accessibility is a particularly good example for the case that you want to make. All of these vendors are very aware of the accessibility lawsuits of the last few years, and all of them know that getting fingered in a lawsuit could be very, very bad for business. That said, I believe that the vendors are, by and large, about as “student-centric” as the average of their customer base, which is to say that they genuinely hold an ideal that they often fail to live up to for lots of both practical and emotional/political reasons. Further, unlike the colleges and universities, the vendors can legitimately argue that they are being paid to help the people who help the students. After all, students aren’t the ones who select the LMS. If colleges and universities were to start selecting their LMS vendors on the basis of how “student-centric” they are, then LMSs would become more student-centric.

On the subject of the early warning system design, you make a fair point about the difference between design and implementation. I’m very interested in your promised follow-up post about why you would start with the faculty view. I get that D2L and Blackboard both are aware of the student-facing portion of Course Signals, and that both companies legitimately plan to implement those features eventually. What I’m interested in is the rationale for prioritization. And my interest is driven by concerns about effectiveness rather than any ideal of student-centricity. We have very good data regarding the effectiveness of Purdue’s model. I’m not aware of any data we have regarding teacher dashboards that is remotely close to being as compelling as Purdue’s.

Now, sometimes you build a product in a particular order for the same reason that you have to build the first floor of a building before you build the second floor, even if you know that the most important rooms are on the second floor. But if that’s the argument for building teacher dashboards first, then I’d like to hear it articulated in some detail. Because as far as I’m concerned, the difference in value propositions between the two feature sets is pretty dramatic.

As a twenty-five-year F2F instructor, I’ve been somewhat cynical about the claims made for online education, though not in the sense that it didn’t work. I tutor a lot online and think it can work just fine. I just never thought it really had attributes that made it better than being in a classroom with a real instructor.

But after reading this post and seeing those statistics, I think I’m moving over to the believer side. As classes get bigger and bigger, even in a F2F classroom, there is no way an instructor could keep abreast of ALL students who were falling by the wayside in terms of course content, so having technology that can do it for you is a huge step forward and really does, in this instance at least, put online teaching a big step ahead of the brick-and-mortar classroom experience.

In another thread, Laura Gibbs mentioned knowing much more as an instructor in an online course than she did in a regular classroom, and I thought, I don’t really get that. But now I do. This is just a terrific use of educational technology.

Based on conversations that I’ve had with the online education departments of many schools, it is clear that most colleges consider the instructor to be responsible for retention. As a creator and seller of student retention analytics, I have found that most schools have no centralized staff that can pro-actively reach out to students — even if they had the perfect predictor of who is most at risk.

While I can’t dispute the Purdue statistics above, the behavior of teenage biology students at a major four-year university is going to be markedly different from that of a 30-year-old single parent taking a medical billing course online. So I’m not convinced that the Purdue figures are universal. While students may not have a “dashboard” in the LMS, they certainly have access to grades and missing assignments. Schools can find ways to send “increasingly insistent messages pushing [students] to get help” but I’m a bit baffled as to why schools don’t hire a couple of retention specialists to identify the at-risk students and make those calls. Given the cost of attrition, saving a small number of students should more than repay the effort.

Banks call their customers when loans aren’t being paid. Perhaps schools need to take a page from that book.

This is the promised follow-up post on the design and implementation of Early Warning Systems. Michael posed the question: “Given the success of the ‘Purdue model’ why are more educational technology vendors not following it?” Michael also states that “we have very good data regarding the effectiveness of Purdue’s model.” Michael raises an important question and I appreciate that the e-Literate blog is providing a forum for the educational technology community to discuss best practices in learning analytics.

My post will be in two parts. In this post I want to examine what we mean precisely by the “Purdue model”. In the next post I will discuss some design and implementation considerations that went into Desire2Learn’s Student Success System. (Note: I am no longer at Desire2Learn and not a company spokesman. Hanan Ayad and I designed the Student Success System when I was at Desire2Learn.)

Let’s consider then the “Purdue model” and Course Signals. In a nutshell here’s how Course Signals works: A statistical model generates a risk signal (green, yellow, red) for each student in a course. The signal can be generated any number of times as the course progresses. The course signal can then be displayed to students, instructors, or both. (Note: Ellucian purchased the rights to Course Signals and might have made modifications. But my understanding is that the basic design still holds. I will leave it to Ellucian and others to clarify.)
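To make the nutshell description concrete, here is a minimal sketch of how a risk score might be turned into a green, yellow, or red signal. Everything in it is an assumption made for illustration: the `risk_score` input stands in for whatever the statistical model produces, and the thresholds, names, and data structure are hypothetical. None of it is taken from Course Signals itself.

```python
from dataclasses import dataclass

# Hypothetical cut-offs, chosen only for illustration. The actual mapping
# used by Course Signals is not described in this discussion.
YELLOW_THRESHOLD = 0.33
RED_THRESHOLD = 0.66


@dataclass
class StudentSnapshot:
    """One student's risk estimate at a point in the course (hypothetical)."""
    student_id: str
    risk_score: float  # assumed model output: 0.0 (low risk) to 1.0 (high risk)


def risk_signal(risk_score: float) -> str:
    """Map an assumed 0-1 risk score to a traffic-light signal."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0.0 and 1.0")
    if risk_score >= RED_THRESHOLD:
        return "red"
    if risk_score >= YELLOW_THRESHOLD:
        return "yellow"
    return "green"


def signals_for_course(snapshots: list[StudentSnapshot]) -> dict[str, str]:
    """Compute a signal per student; this can be re-run as the course progresses."""
    return {s.student_id: risk_signal(s.risk_score) for s in snapshots}
```

Who sees the resulting dictionary (students, instructors, or both) is a separate decision from how the signal is computed, which is the distinction the rest of this post turns on.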

The elegance and virtue of Course Signals is its simplicity. John Campbell, Kim Arnold, and others who worked on the project are true pioneers for originating Course Signals and more importantly sparking the learning analytics revolution. Course Signals was the *first* substantive demonstration that learning analytics can have a major impact on student learning outcomes and retention.

But Course Signals has important limitations and these have to be taken into account if we are to generalize the lessons and apply it to other institutions. The limitations have to do primarily with the underlying predictive model, the interpretability of the signal, and accommodating each institution’s unique workflow requirements around intervention. I will address these limitations in more detail in my next post.

Let’s return now and ask what precisely is the “Purdue model”? As I noted above the risk signal can be displayed to students, to instructors, or to both. Purdue, therefore, had to make an important implementation decision. Do we display the signal only to students? Do we display it only to instructors? Or, do we display it to both? I take it from Michael’s discussion that he believes the “Purdue model” is one where the signal is directly and primarily, if not exclusively, made available to students. Otherwise, his criticism of educational technology vendors makes no sense. But this is not how Course Signals was implemented at Purdue. Therefore, what Michael describes as the “Purdue model” is not the Purdue model at all. At Purdue the signal was made available to both instructors and students. It might be that at Purdue initially the signal was made available only to faculty and it was left to their discretion whether and how they would contact students. I am not sure about this though.

If one reads the literature on Course Signals it’s apparent that instructors had a pivotal role from the very beginning. In fact, here is how Kim Arnold and Matt Pistilli, in one of their papers on Course Signals, describe Course Signals: “Course Signals (CS) is a student success system that allows *faculty* (emphasis mine) to provide meaningful feedback to student based on predictive models.” (Course Signals at Purdue: LAK’12, 29 April – 2 May 2012, Vancouver, BC, Canada. Copyright 2012 ACM 978-1-4503-1111-3/12/04) In another paper Pistilli and Arnold report that “Beyond the performance and behavior data, surveys, focus groups, and interviews with students produced a resoundingly favorable perception of Signals and their instructors’ effort. Students got a true impression of *direct* interaction with their instructor — they no longer felt like ‘just a number in a huge lecture class,’ and they shared surprise with the fact that their instructor ‘really cared about how they did.’” (Purdue Signals. About Campus. July-August 2010). In other words, students didn’t just see the signal; they received communication from their instructor.

I was keen to know and I remember posing the following question to Kim Arnold during a discussion we had at a conference. I asked, “In your experience with Course Signals, what is the key feature that you believe led to its success?” I remember her answering: “The individual student getting the message that we at Purdue really *care* (emphasis mine) about you.” Kim’s answer has stuck with me because it goes against the grain of a line of thinking that we see sometimes from vendors. I call this line of thinking technology determinism: just design the right technology and the implementation will take care of itself. I don’t believe in technology determinism.

I have known Michael for many years and I know he doesn’t believe in technology determinism. Not at all. (Michael was a principal designer for a very successful Executive Education course in Finance when we overlapped at MIT.) However, in some of his writings about Course Signals Michael writes like a technology determinist: “In other words, the technology actually teaches the students skills that are vital to their success. Once they learn those skills, they no longer need the tool in order to succeed. So there is no mystery about what at-risk students need or how technology can help provide it to them.” Does a simple signal (green, yellow, red) by itself teach students skills vital to their success? The reason why Course Signals worked at Purdue, in my opinion, is because the technology was defined, implemented and then refined as part of an academic governance where faculty were integrally involved. And all my experience through the years tells me that if instructors don’t understand and support an academic technology, it will be sure to fail.

I interpret the Purdue model, therefore, very differently than Michael. The reason why Course Signals succeeded at Purdue was a combination of brilliant technology design and thoughtful implementation. The implementation fit the workflow and culture at Purdue. The Purdue team also continued to refine the model, not just the technology model, to fit the student *and* faculty experience. To adapt a phrase from Kant, good design without proper implementation is empty and proper implementation without good design is blind. In my next post I will discuss the design and implementation assumptions that went into Desire2Learn’s Student Success System.