I’ve been super-busy with a consulting gig over the last few weeks and have fallen off the wagon with my blogging. This is one of the many reasons that I am grateful to have Phil as my prolific yet profound co-publisher and that we have attracted a group of terrific featured bloggers.

Anyway, I thought I would get back to business with a long-overdue post about D2L’s learning analytics product, called “Insights.” There are several pieces to the product, but I’m going to focus on the component that they call the Student Success System. I have blogged from time to time on Purdue’s Course Signals project (now commercialized in an offering from Ellucian) as having set the bar for student retention analytics. More recently, I wrote about Blackboard’s Retention Center, which is clearly following in Purdue’s footsteps. My impression of Retention Center is that it is a reasonable Version 1 product that captures some but not all of the value of Course Signals.

D2L’s Student Success System also follows in Purdue’s footsteps. But rather than simply playing catch-up, I would call it an incremental but meaningful improvement over Course Signals in most aspects. From what I can tell based on initial conversations with D2L about the product details, this now appears to be the system to beat. (I reserve the right to change my opinion based on implementation experiences from clients, which are particularly important for this product.)

Let’s start by reviewing the Purdue model:

- Purdue discovered that student retention and completion could be strongly predicted by just a few generic indicators within the LMS, such as recency of login, participation in class discussions, assignments turned in on time, and overall grades.

- The accuracy of early warning predictors could be improved by taking into account a few longitudinal indicators from the SIS such as GPA and entrance exam scores.

- Students who are at risk generally aren’t good at knowing when they are in trouble and should seek help.

- When students get an easy-to-read, automated indicator, based on the data elements described above, telling them that they are in trouble and should seek help, at-risk students tend to learn help-seeking behaviors and move themselves out of the at-risk category.
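To make the shape of that model concrete, here is a minimal sketch of a traffic-light indicator built from the kinds of LMS and SIS inputs listed above. It is not Purdue's actual algorithm, which is not described in this post. The field names, weights, and thresholds are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class StudentSnapshot:
    # All fields are illustrative stand-ins for the indicators named above.
    days_since_login: int    # recency of LMS login
    discussion_posts: int    # participation in class discussions
    on_time_ratio: float     # fraction of assignments turned in on time, 0..1
    current_grade: float     # overall course grade so far, 0..100
    prior_gpa: float         # longitudinal indicator from the SIS, 0..4


def risk_score(s: StudentSnapshot) -> float:
    """Combine the indicators into a 0..1 risk score (hypothetical weights)."""
    score = 0.0
    score += 0.25 * min(s.days_since_login / 14, 1.0)   # two weeks away counts as maximum risk
    score += 0.15 * (1.0 if s.discussion_posts == 0 else 0.0)
    score += 0.25 * (1.0 - s.on_time_ratio)
    score += 0.25 * (1.0 - s.current_grade / 100)
    score += 0.10 * (1.0 - s.prior_gpa / 4.0)
    return score


def signal(s: StudentSnapshot) -> str:
    """Map the risk score onto a red/amber/green light (hypothetical cutoffs)."""
    r = risk_score(s)
    if r >= 0.5:
        return "red"
    if r >= 0.25:
        return "amber"
    return "green"


engaged = StudentSnapshot(1, 5, 1.0, 90, 3.6)
drifting = StudentSnapshot(7, 1, 0.7, 70, 3.0)
struggling = StudentSnapshot(14, 0, 0.4, 55, 2.0)
print(signal(engaged), signal(drifting), signal(struggling))
```

A real system would fit the weights to historical retention data rather than hard-coding them. The point of the sketch is only that a handful of generic inputs can be reduced to a signal a student can read at a glance.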

Both Blackboard and D2L have focused mainly on the first two bullet points, while designing their products to be more teacher-focused and broader in purpose than Course Signals. This is a perfectly sensible product design trade-off to make, although it does have some consequences that I will get to later in the post.

There are a few areas where D2L’s product stands out. First, they appear to have put quite a bit of thought and research into their algorithms and have even published an academic paper on their work. (Paywall; sorry.) One of the tricky problems with this sort of analytics product is the balance between predictive power and generalizability. Faculty and students use the LMS very differently from course to course. On the one hand, this suggests that the data indicators of an underperforming student in a comparative literature class might be quite different from those in a physics class, or even from one teacher to another within the same subject. On the other hand, it takes time, expertise, and a lot of data to fine-tune a statistical model and make sure that it has any real predictive power. Trying to develop predictive capabilities for a 30-person course based on a semester or two of data isn’t going to work.

This is genuinely hard stuff. Blackboard’s strategy seems to have been to stick to the least-common denominators where we know there is some predictive power across a wide variety of course contexts (particularly if you compare a student’s performance on that indicator versus the class average). D2L, on the other hand, has looked across a broader variety of indicators and strung them together into several clusters of concerns—namely, Attendance, Preparation, Participation, and Social Connectedness. This is not blind data mining; these category names make it clear that D2L is coming to the data with relatively specific sets of hypotheses about what sorts of data would be predictors of student success. But they are precisely sets of hypotheses. If I understand their approach correctly, D2L is testing related hypotheses against each other to derive an aggregate predictor for each of these areas. I am not in a position to evaluate how much of a practical difference this approach makes versus Bb’s simpler one in terms of accuracy, but it is certainly a more sophisticated approach. Likewise, D2L’s product can take inputs from the SIS while, as far as I can tell, Bb’s retention product cannot. (Blackboard’s full Analytics product does take SIS data, but there does not appear to be integration with the Retention Center feature of Learn in the current release.)
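As a rough illustration of the two ideas in the paragraph above, the sketch below compares each student's indicators against the class average and then rolls related indicators up into the four named areas. The indicator names and the equal-weight averaging are assumptions made for this example. D2L's actual method, which tests related hypotheses against each other, is more sophisticated and is not reproduced here.

```python
from statistics import mean, pstdev

# Hypothetical mapping from raw LMS indicators to the four areas named above.
CLUSTERS = {
    "Attendance": ["logins", "session_minutes"],
    "Preparation": ["content_views"],
    "Participation": ["posts", "quiz_attempts"],
    "Social Connectedness": ["replies_received"],
}


def z_scores(values):
    """Express each value as standard deviations from the class average."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Everyone has the same value, so nobody stands out on this indicator.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def cluster_scores(class_data):
    """Return {student: {area: score}} where each score is the plain average
    of that student's z-scores for the indicators in the area."""
    students = list(class_data)
    indicators = sorted({name for names in CLUSTERS.values() for name in names})

    # z[indicator][student] = how far that student is from the class average
    z = {}
    for ind in indicators:
        zs = z_scores([class_data[s][ind] for s in students])
        z[ind] = dict(zip(students, zs))

    return {
        s: {area: mean([z[ind][s] for ind in inds]) for area, inds in CLUSTERS.items()}
        for s in students
    }


# Made-up data for a three-student class.
class_data = {
    "ana": {"logins": 2, "session_minutes": 30, "content_views": 1,
            "posts": 0, "quiz_attempts": 1, "replies_received": 0},
    "ben": {"logins": 10, "session_minutes": 150, "content_views": 8,
            "posts": 4, "quiz_attempts": 3, "replies_received": 3},
    "cai": {"logins": 18, "session_minutes": 270, "content_views": 15,
            "posts": 8, "quiz_attempts": 5, "replies_received": 6},
}

for student, areas in cluster_scores(class_data).items():
    print(student, {area: round(score, 2) for area, score in areas.items()})
```

Negative scores mean a student is below the class average in that area. Scoring relative to the class, rather than against fixed cutoffs, is what lets the same indicators carry over between courses that use the LMS very differently.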

Another innovative aspect about D2L’s offering is the balance between product and service. One advantage of the sophisticated modeling is that it can be used to create more specific models—maybe not that 30-person comp lit course, but quite possibly that survey biology course that has 1,500 students a semester across multiple sections. For a critical bottleneck course, having a more accurate predictive model that helps you actually get more students successfully through the course could be a big deal. But to do that, you need both tools that the school can use to develop the model and training and support to help them learn how to do it. D2L is offering these. I am very interested in learning more about the practical ability for schools to put them to use. If this works well, it could be a big deal.

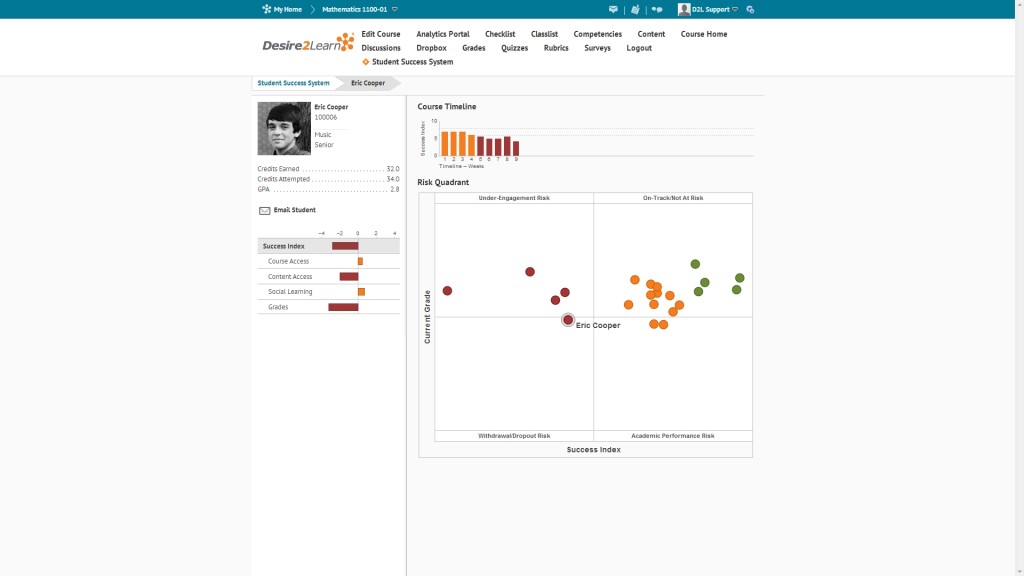

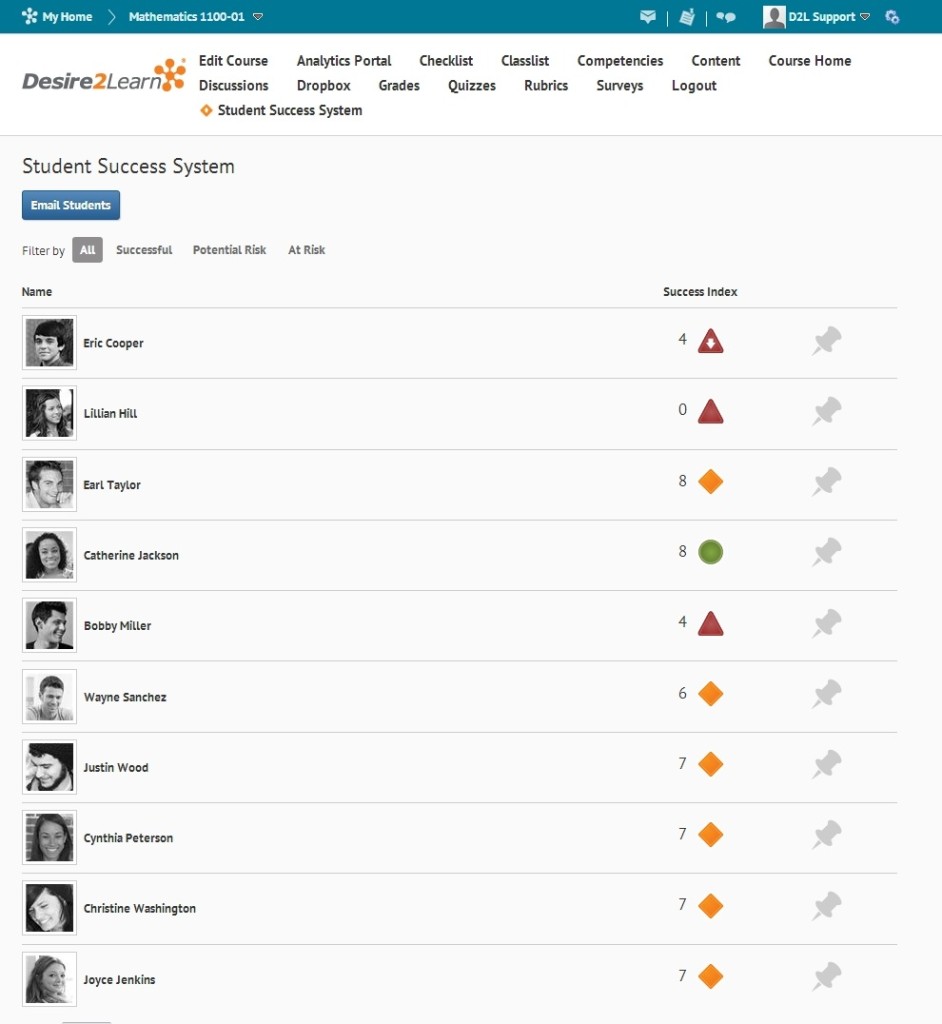

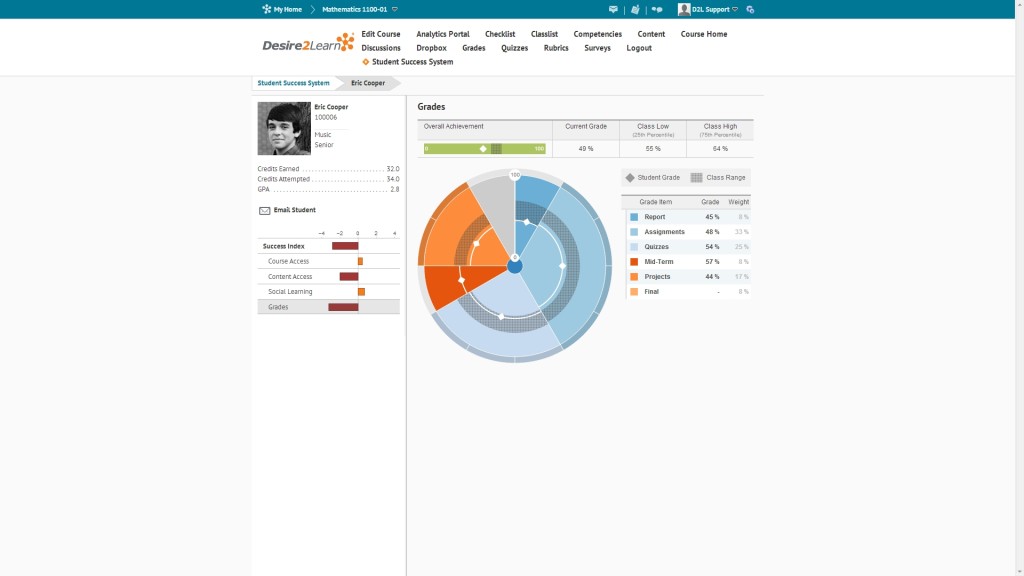

Finally, D2L has put some work into the visualizations to help teachers make sense of the data that they are getting. They provide considerably more information than the red/amber/green lights that Course Signals provides, although at some cost to readability at a glance:

The primary focus here is on giving teachers more actionable information on how their students are doing.

The only piece that’s really missing relative to Purdue is the tested student interventions. Purdue’s product is designed specifically to teach students some meta-cognitive skills. While the raw ingredients are there for D2L’s product to do the same, they do not yet have the refined and tested student-facing dashboards and call-to-action messages that Course Signals does.

So, overall, this looks like a very nice product that adds to the state of the art. But the devil is in the (implementation) details. I’m going to try to talk to some D2L clients who are actually using this product next week when Phil and I are at D2L FUSION.

So true about how different faculty use the LMS differently. Retention and student progress have always been big concerns of mine and from when I started teaching online I came up with a low-tech PEDAGOGICAL solution to this problem (since obviously I wasn’t going to get substantial help from Blackboard back in 2002 when I started teaching online). I designed my course grading system as a points-intensive system so that students ALWAYS know exactly how they are doing.

Everything they do in the class has points attached – quizzes have points, and students declare their other work via declarations (call them rubrics if you want) which are also configured as quizzes (where “true” is the right answer, and the points go straight into the Gradebook). So, a typical week has 2 reading quizzes, 3 blogging declarations, plus 2 other participation declarations – along with extra credit declarations: http://onlinecourselady.pbworks.com/w/page/43999008/mythfolklore03 (I record one item per week per student related to their semester-long project).

Students always know where they stand week by week: http://onlinecourselady.pbworks.com/w/page/44034097/gradingchart

I design my interventions (which are extensive and varied) based on tracking total points and also tracking points per type of assignment (i.e. students with consistently low scores on reading quizzes get extra tips and encouragement from me about how to tackle the reading assignments).

D2L doesn’t help me with any of that (their Gradebook does not work like a real spreadsheet should) – but I just create my own spreadsheet with GoogleDocs, run the formulas, and proceed. Students, meanwhile, have a very clear sense of where they stand at any given moment.

So, that’s my low-tech solution and it works great – although it is not the way most people teach, not even online. I had to come up with something, because if I waited on the LMS to provide my intervention data, I would have been lost all these years – not to mention that the odds of my school ponying up even more money for D2L products are low.

I would be interested to read what you learn from FUSION. This specific product version/rename is very new and I don’t think it has been deployed in production to many institutions. It also requires a client to be on D2L 10.2. One of the drawbacks of these systems is that if faculty and students do not interact heavily with the LMS, then your data will likely be poor. Many institutions don’t have 100% of a course in an LMS. If only 20-30% of student work is in the LMS, then that shortfall has to be made up some way to develop a “full understanding.” That may lead to faculty manually entering student scores and/or participation to have data to predict from. Or so it would seem.

Bill, obviously, if a teacher only uses the LMS to post a syllabus, then analytics are not going to add a whole lot. But Purdue only used Course Signals on F2F classes during their research and they were able to get good predictive data across a range of classes. I wonder if the value of the analytics incentivizes faculty to make more use of the LMS to some degree.

Laura, you continue to amaze me. If we could bottle that energy you put out, we probably wouldn’t have to deal with all this hydrofracking nonsense.

Hi Mike~

It would be cool if you had some share icons on your posts. I have to post links when I talk about your blog content and it would be great to have a “Share this” option!

Cheers,

Pamela

We have them, Pamela. They are just hard to find, unfortunately. At the bottom of the post text, right above the “About the Author” box, there is a series of sharing icons. I’ll look into making them more obvious.

Ha ha, Michael, I love the idea of being better than fracking. But the energy metaphor is just the opposite of what you imagine: I set up my classes this way because I am very guarded in my use of time (to put it more simply: I am lazy!), so I am trying to make the most of my time and the most of my students’ time. To be honest, probably the single most useful thing my students learn from my classes would be better time management skills since I make that explicitly a topic in the class. I use the “this may be your first fully online course…” approach as my excuse to focus in on time management as an essential feature of the class (even though time management could and should be a part of every single college course I suspect).

The problem is that Michael still believes there is “a” Laura Gibbs instead of a small cadre of online teaching experts mutually blogging / G+ing / commenting under the Laura Gibbs name. As if one person could give that much feedback to students as well as have deep interactions online in social networks and blogs.

Thought I would share this fine blog post re: analytics (BB perspective this time) from JD Ferries-Rowe – I love the “isn’t worth the squeeze” metaphor – http://geekreflection.blogspot.com/2013/07/making-sure-juice-is-worth-squeeze.html

FWIW, a small group at the Univ of Wisconsin-Madison (along with UW-Platteville and UW Colleges) is piloting D2L’s Analytics, er Insights, system through June 2014. You can read updates on the project here: http://www.cio.wisc.edu/learning-analytics-pilot.aspx

In general, my colleagues have told me that the infrastructure has a long ways to go (complicated by the fact our institution has an enormous amount of data from D2L’s LE). I’ve also heard that initial pilot faculty were reluctant to make any intervention decisions based on the analytics tools. Another group of pilot faculty is being formed for the upcoming academic year.

It seems Purdue’s Course Signals program has a critical element in its approach (as does Laura) in contrast to D2L and Blackboard by providing the students with data and information [automated indicator in Course Signals] on his or her progress and performance. D2L and Blackboard are focusing their efforts on enhancing the instructional aspect by giving tools to the teacher, rather than giving responsibility to the student for his or her own learning. I realize the instructor has expertise and skills to support development, however the student needs to be part of the system.

Debbie, what you say is so true and it applies not just to the analytics aspect of Desire2Learn but the software as a whole. It is built first and foremost for school administrators and IT staff (they, after all, exert most of the influence over the purchase of D2L at any given school), with instructors a distant second, and students far far far behind.

So, not surprisingly, the best D2L statistics are those available to our D2L admin (and even those are very poor; usually when I ask her if we have statistics about some aspect of D2L – such as the rates at which courses actually use a specific feature – she replies that the data is not available even to her), and the statistics about my own course available to me are extremely limited… and all students can see is the not-very-well-designed Gradebook.

Laura, so frustrating isn’t it? The focus by the LMS companies is backwards – get to the student first then work up to the administrators.

Purdue is on the ball as an institution. I recently participated in Sloan Academy’s conference on blended learning, and Purdue has an impressive program to support the institution’s efforts to convert courses to an active learning format that enhances instructors’ teaching and reduces class seat-time!