I have long argued that the development of technical interoperability standards for education is absolutely critical for enabling innovation and personalized learning environments. Note that I usually avoid those sorts of buzzwords (“innovation” and “personalized learning”), so when I use them here, I really mean them. If there are two fundamental lessons we have learned in the last several decades of educational technology development, they are these:

- Building monolithic learning environments generally results in building impoverished learning environments. Innovation and personalization happen at the edges of the system.

- There are tensions between enabling innovation at the edges and creating a holistic view of student learning and a usable learning environment. Integration does matter.

To these two education-specific principles, I would add a general principle about software:

- All software eventually grows old and dies. If you can’t get your data out easily, then everything you have done in the software will die with it (and quite possibly kill you in the process).

Together, these lessons make the case for strong interoperability standards. But arriving at those standards often feels like what Max Weber referred to as “the strong and slow boring of hard boards.” It is painful, frustratingly slow, and often lacking a feeling of accomplishment. It’s easy to give up on the process.

Having recently returned from the IMS Learning Impact Leadership Institute, I must say that the feeling was different this time. Some of this is undoubtedly because I no longer serve on any technical standards committees, so I am free to look at the big picture without getting caught up in the horrifying spectacle of the sausage making (to mix Germanic political metaphors). But it’s also because the IMS is just knocking the cover off the ball in terms of its current and near-term prospective impact. This is not your father’s standards body.

Community Growth

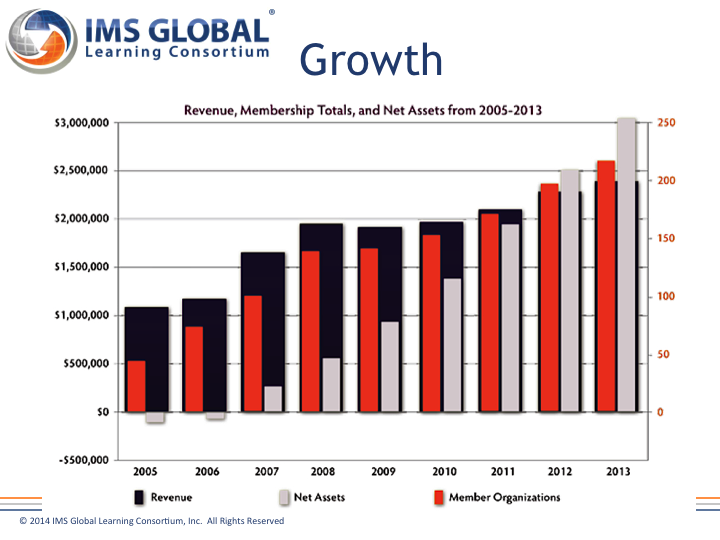

The first indicator that things are different at the IMS these days is the health of the community. When I first got involved with the organization eight years ago, it was in the early stages of recovery from a near-death experience. The IMS was dying because it had become irrelevant. It just wasn’t doing work that mattered. So people stopped coming and organizations stopped paying dues. Then Rob Abel took over and things started turning around.

Membership has quadrupled. Interestingly, there was also a very strong K12 contingent at the meeting this year, which is new. This trend is accelerating. According to Rob, the IMS has averaged adding about 20 new members a year for the last eight years but has added 25 so far in 2014.

Implementations of IMS standards are also way up:

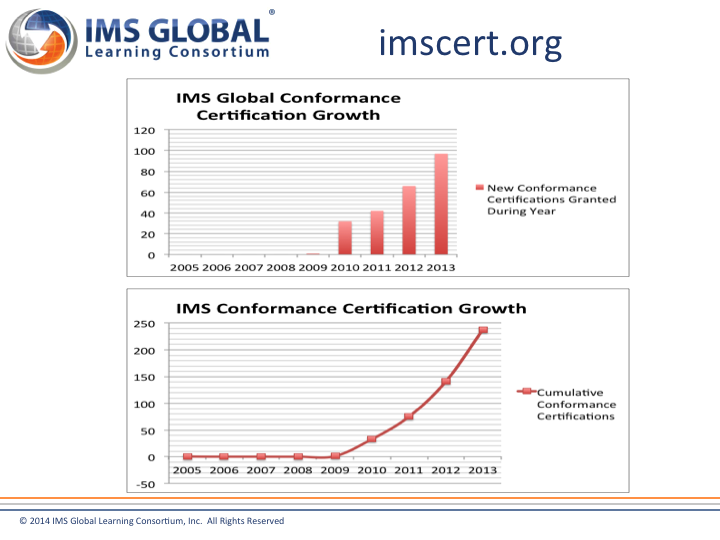

Note that conformance certification is a new thing for IMS. One of the key changes in the organization was an effort to make sure that the specifications led to true interoperability, rather than kinda-sorta-theoretical interoperability. Close to 250 systems have now been certified as conforming to at least one IMS specification. (Note that there are also a number of systems that conform but have not yet applied for certification. So this number is not comprehensive.) And here again, the trend continues to accelerate. According to Rob, the IMS averaged two new conformance certifications a week in 2013 and is averaging four new certifications a week so far in 2014.

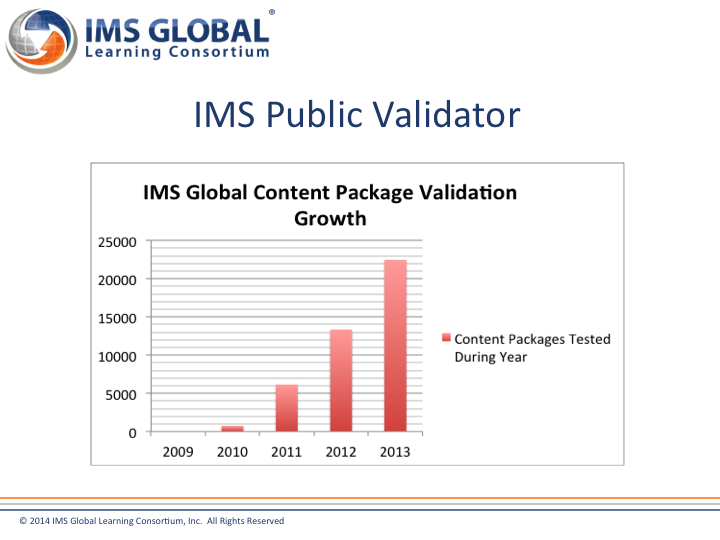

Keep in mind that these numbers are for systems. A lot of the things (for lack of a better word) that conform to IMS specifications are not systems but bundles of content. Here the numbers are also impressive:

So a lot more people are participating in IMS and a lot more products are conforming to IMS specification profiles.

Impact

One of the effects of all of this is that LMS switching has gotten a lot easier. I have noticed a significant decline in campus anxiety about moving from one LMS to another over the past few years. There are probably a number of reasons for this, but one is undoubtedly that switching has gotten significantly easier due to IMS interoperability specifications. For content, all popular LMSs in the US higher education market import Common Cartridge packages, and several of them export to Common Cartridge. (By the way, I will say it again: If you are in the process of selecting a new LMS, you should make export to Common Cartridge a buying criterion.) Hooking up the LMS to the administrative systems so that class information can be populated into the LMS and final grades can flow back to the SIS has gotten much easier thanks to the LIS specification. And third-party (including home-grown) tools that work in one LMS usually work in another without extra programming, thanks to the LTI standard.

But I think the IMS is still just warming up. LTI is leading the way for the next wave of progress. Under the stewardship of Chuck Severance, LTI is now supported by 25 learning platforms. edX and Coursera both recently announced that they support LTI, for example. It has become the undisputed standard for integrating learning tools into platforms. This means that new learning tool developers have a straightforward path to integrating with 25 learning platforms simply by supporting LTI. My guess is that a good portion of those four new conformance certifications a week are LTI certifications. I see signs that LTI is facilitating a proliferation of new learning tools.

There is a lot of new work happening at the IMS now, but I want to highlight two specifications in development that I think will take things to the next level. The first is Caliper. I have waxed poetic about this specification in a previous post. In my opinion, the IMS is under-representing its value by billing it as an analytics specification. It is really a learning data interoperability specification. If you want loosely coupled learning tools to be able to exchange relevant data with each other so that they can work in concert, Caliper will enable that. It is as close to a Holy Grail as I can think of in terms of resolving the tension that I called out at the top of the post.

The second one is the Community App Sharing Architecture (CASA). Think of it as kind of a peer-to-peer replacement for an app store, allowing the decentralized sharing of learning apps. As the UCLA Education and Collaborative Technology Group (ECTG) puts it,

> The World Wide Web is a vast, mildly curated repository of information. While search engines fairly accurately filter the Internet based on content, they are less effective at filtering based on functionality. For example, they lack options to identify mobile-capable sites, sites that provide certain interoperability mechanisms, or sites related to certain industries or with certain content rating levels. There is a space where such a model already exists: the “app stores” that pervade the native mobile app landscape. In addition to the app itself, these hubs have deep awareness of application metadata, such as mobile and/or tablet support. Another deficit of search engines is their inability to allow organization-based configuration, defining a worldview with trust relationships, filters and transformations to curate the results they present to end users. Native app stores use a star (hub-and-spoke) topology with a central hub for publishing, which lacks this fine-grain customizability, but an alternative peer-to-peer topology, as is used for autonomous systems across the Internet, restores this freedom.

CASA should facilitate the further proliferation of learning apps by making them more easily findable and sharable, drawing on affinity networks (e.g., English composition teachers or R1 universities). Caliper will enable these tools to talk to each other and create an ensemble learning environment without having to rely on vendor-specific infrastructure. And LTI will enable them to plug into a portal-like unifying environment if and when that is desirable.

Who says technical standards aren’t exciting?

[…] Many thanks to Michael Feldstein of the e-Literate Blog for the insightful post on IMS progress entitled The IMS Is More Important Than You Think It Is. […]