All the same We take our chances

Laughed at by Time, Tricked by Circumstances

Plus ça change, Plus c’est la même chose

The more that things change, The more they stay the same

– Rush, Circumstances

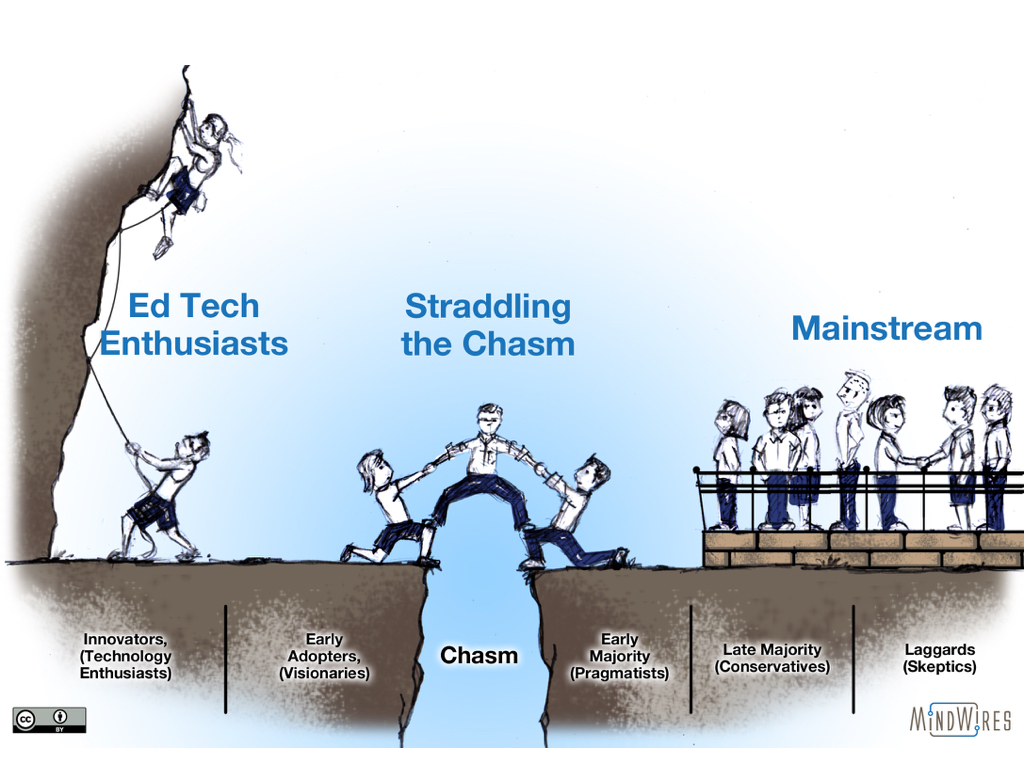

Over the past few years I have increased my usage of the technology adoption curve – originating from Everett Rogers and extended by Geoffrey Moore – to explain some of the tension faced by ed tech support organizations. In a nutshell, adoption patterns for pedagogical and technology-enabled changes in education are determined more by social change issues than by the innovation itself (whether purely pedagogical or tech-based). It’s not technology, it’s people. One graphic in particular that I use is based on the following notion: while Moore presented a chasm in the technology adoption curve and described solutions moving across that chasm to reach majority markets, the bigger issue in education is that we will always have innovator, early adopter, majority, and laggard groups, along with a constant flux of teaching and learning innovations. Therefore the issue is straddling the chasm and helping both sides.

When Michael and I gave a keynote at last month’s OpenEd conference, the always interesting Alan Levine pointed out via Twitter (and referencing a blog post of his) that this perspective reminded him of one he read years ago and rediscovered in 2014. I found the article (and the author, I think), and it is a fascinating read that shows just how strong the pattern of innovation in ed tech is. The 1994 article could be written today with just a few changes to examples used.

Whatever Happened to Instructional Technology?

William Geoghegan was working in academic technology consulting at IBM in 1994 when he wrote the seminal article “Whatever Happened to Instructional Technology?”, a paper presented at the 22nd Annual Conference of the International Business Schools Computing Association. Keep in mind that 1994 was just one year after the release of Mosaic, the first widely used web browser, so the Internet had not yet become the dominant communication medium. As Alan noted in his post, a few excerpts from this 21-year-old paper are “quite revealing and relevant”.

The advent of digital computers on college campuses more than three decades ago brought with it a growing belief that this new technology would soon produce fundamental changes in the practice, if not the very nature, of teaching and learning in American higher education. It would foster a revolution where learning would be paced to a student’s needs and abilities, where faculty would act as mentors rather than “talking heads” at the front of an auditorium, where learning would take place through exploration and discovery, and where universal educational access, transcending barriers of time and space, would become the norm. This vision of a pedagogical utopia has been in circulation for at least three decades, enjoying a sort of perpetual imminence that renews itself with each passing generation of technology.

So the vision of personalized learning and its attendant faculty role change is not quite as new as might be assumed.

Alan’s post provides quite a few excerpts and comments, but I’m going to focus specifically on the Rogers / Moore perspective.

But there’s a problem. Despite massive technology expenditures over the last decade or so, the widespread availability of substantial computing power at increasingly reasonable prices, and a growing “comfort level” with this technology among college and university faculty, information technology is not being integrated into the teaching and learning process nearly as much as people have regularly predicted since it arrived on the educational scene three or four decades ago. There are many isolated pockets of successful technology implementations. But it is an unfortunate fact that these individual successes, as important and as encouraging as they might be, have been slow to propagate beyond their initiators; and they have by no means brought about the technologically inspired revolution in teaching and learning so long anticipated by instructional technology advocates.

That is exactly the problem that I believe is primary. It’s important to understand that the goal is not technology integration; rather, Geoghegan’s critique is that instructional technology has, for the most part, not led to improved instructional practices. His focus is on understanding why that is.

The instructional technology problem, in other words, is not simply a matter of technology being unavailable to faculty. It is not attributable to faculty discomfort with the technology itself, nor to faculty disenchantment with the potential benefits of information technology to instruction. In fact, the best evidence we have available today suggests that desktop computing is being widely used by faculty and, more importantly, that it is being used in support of teaching. The problem is that this support is for the most part logistical in nature: preparation of lecture notes, handouts, overhead transparencies, and other types of printed and display material that substitute for the products of yesterday’s blackboard and typewriter technologies. Such usage may enhance faculty productivity, and it may even help student learning (by substituting neatly printed transparencies for blackboard scribbles, if nothing else); but it does little or nothing to exploit the real value of the technology as an aid to illustration and explanation, as a tool that can assist in analysis and synthesis of information, as an aid to visualization, as a means of access to sources of information that might otherwise be unavailable, and as a vehicle to enable and encourage active, exploratory learning on the part of the student. The technology is being used logistically, in other words, but it is only occasionally being utilized as a medium of delivery, and to even a lesser extent do we find it deeply woven into the actual fabric of instruction.

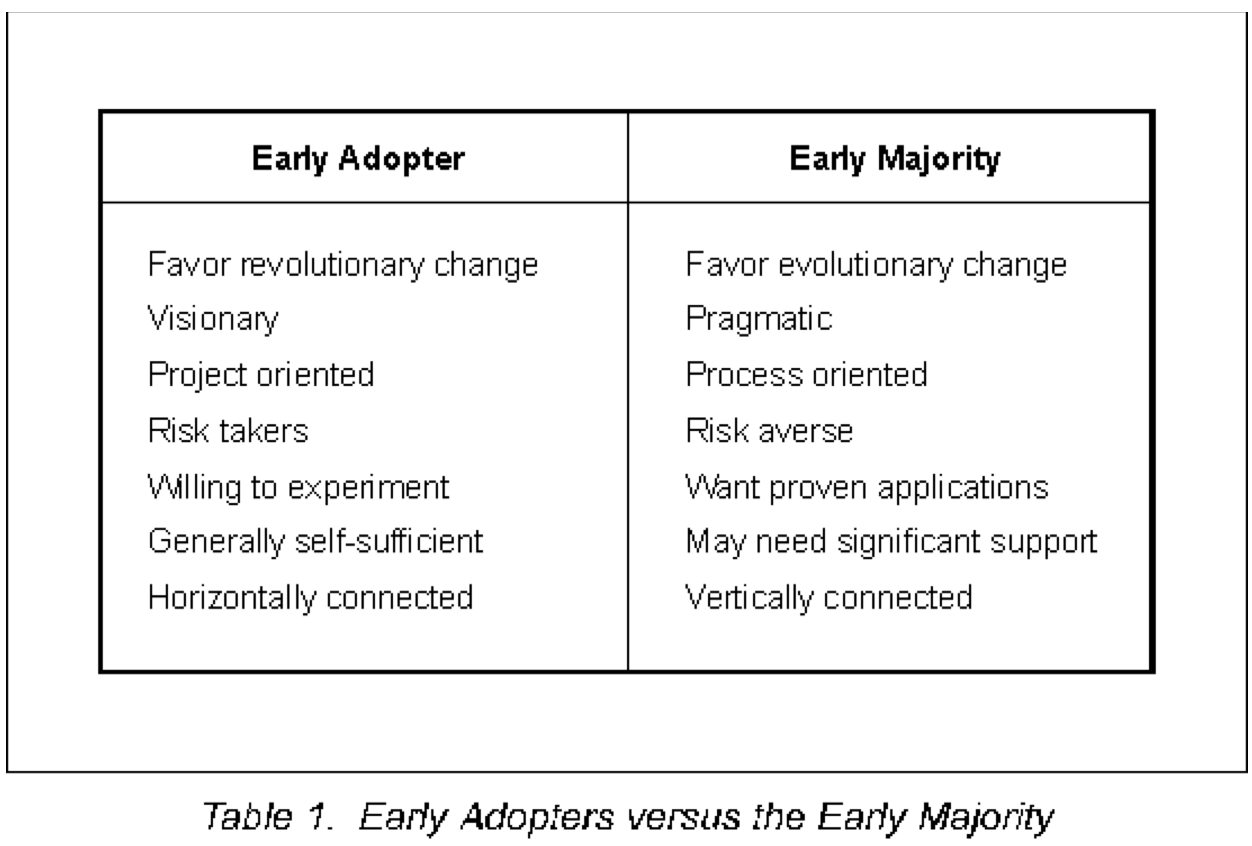

Geoghegan then describes the problems leading to this past and current situation, and uses the Rogers / Moore technology adoption curve and chasm to explain why. The chasm between early adopters and the early majority is key, with the different needs on each side of the chasm summarized in this table.

This perspective is the basis of the graphic “Straddling The Chasm” I have shared at several conferences this year.

In pointing out why instructional technology has not dramatically impacted the mainstream of faculty, Geoghegan describes four factors:

- Ignorance of the gap;

- An unholy alliance of faculty innovators, campus ed tech support staff, and technology vendors focusing on the left side only;

- Alienation of the mainstream faculty; and

- A lack of a compelling reason to adopt.

That third factor is quite interesting and worth quoting.

Differences between the visionaries and the early majority can produce a situation in which the successes of the early adopters actually work to alienate the mainstream. A good application of technology to instruction, for example – one that promises a radical improvement in some aspect of teaching or learning, and that is produced by technically comfortable and self-sufficient visionaries under risky experimental conditions – can attract considerable attention, and it can set what potential mainstream adopters may perceive as unreasonably high expectations that they may be unable to meet. Moore also points out that the “overall disruptiveness” of early adopter visionaries can alienate and anger the mainstream (Moore 1991:59). The early adopters’ high visibility projects can soak up instructional improvement funds, leaving little or nothing for those with more modest technology-based improvements to propose; and their willingness to work in a support vacuum ignores the needs of mainstream faculty who may find themselves left with responsibility for the former’s projects after the developer has moved on to other things. And, finally, the type of discontinuous change favored by the early adopter has a tendency to produce disruptive side-effects that magnify the overall cost of adoption.

So much for the idea that ed tech hype and pushback started with MOOCs.

To Be Fair . . .

There have been some real changes in the past 21 years, and I read this paper as describing a common pattern faced in ed tech rather than an indictment of all innovations failing. Online education is now mainstream with about one out of three US higher ed students taking at least one course online, often allowing a new level of access to working adults in particular. Competency-based education has further served this same demographic in a significant and growing manner. The vast majority of faculty use an LMS (for better or worse; the issue is that this innovation has diffused). The Internet has completely changed the access and availability of free and openly-licensed content.

However, the pattern remains, and the human element of diffusing innovations is the primary issue to address to improve learning outcomes.

In the meantime, William Geoghegan retired from IBM in 2007. He did not respond to an interview request, but I do want to thank him for this great and still-timely paper.
