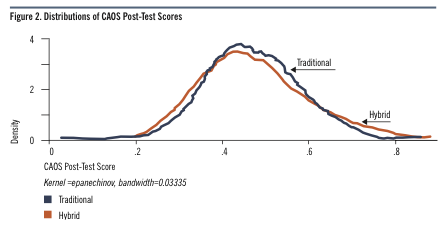

Generally, when we talk about goals for educational technology, we talk about one of two things: improving access or improving effectiveness. Rarely do we get an opportunity to talk credibly about an innovation that can move both of those needles at the same time. And yet, I saw just such an innovation in Pittsburgh this week at the LearnLab Corporate Partners Meeting. LearnLab, jointly run by Carnegie Mellon University and the University of Pittsburgh, is not widely known in ed tech circles. However, its close cousin, Carnegie Mellon’s Open Learning Initiative (OLI), is.

For example, an independent study by ITHAKA S+R made a splash when it showed that OLI could cut instructional time in half and still achieve the same level of effectiveness. Two groups of students across six different public universities took the same introductory statistics class. The first group took a traditional version of the class, spending an average of 3 hours per week in the classroom. The second group took a hybrid version, spending one hour a week in the classroom and additional time studying independently with the OLI cognitive tutoring software. So the second group spent one-third as much time in the classroom, which amounts to a very significant cost savings and an opportunity to teach more students for less money. Students in the second group spent more time on homework than those in the first, but even so, they spent 25% less total time on the class than the students in the traditional class. And yet, both groups achieved essentially the same competence at the end of the class:

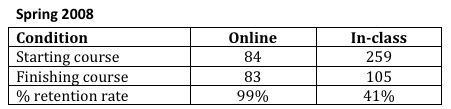

To sum up, OLI was able to teach more students at lower cost and with less student time on task, yet with the same effectiveness as a traditional class. Another, smaller study comparing traditional and cognitive-tutor-hybrid versions of an introductory logic course found that while students in the hybrid condition showed a small (though not statistically significant) gap in learning effectiveness relative to their peers, they achieved more than double the completion rate:

OLI is pretty well known in the broader ed tech community. They show up at conferences. The ITHAKA study got lots of play. I find it odd that we have not had a broader conversation about how they have achieved their results, because the techniques developed by OLI, their colleagues at LearnLab, and their collaborators at other institutions have serious implications for OER, MOOCs, the textbook industry, and, really, the future of education.

Cognitive Task Analysis

OLI and LearnLab have a whole bag of useful tricks, but the one I want to focus on is called Cognitive Task Analysis (CTA). CTA is actually a pretty broad family of methods that is used in on-the-job performance support as well as in education, but the heart of it is figuring out the mental steps a person has to go through in order to achieve a goal. For example, suppose a student is trying to solve a math word problem about the length of wire needed to stake a tent pole a certain distance from the tent. First she needs to understand the problem to be solved, which involves reading comprehension. Then she needs to recognize the salient aspects of the problem. For example, “Oh, the tent pole and the ground form a right angle! This is a triangle problem.” Then she needs to recall how to solve for the length of the hypotenuse of a right triangle. And so on.
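To make the decomposition concrete, here is a minimal Python sketch of the tent-pole problem in which each solution step is tagged with the knowledge component it exercises. It assumes the wire runs from the top of the pole to a ground stake, so pole, ground, and wire form a right triangle; the step labels (`extract-givens`, `recognize-right-triangle`, and so on) are hypothetical and not taken from any LearnLab model.

```python
import math

def solve_tent_problem(pole_height: float, stake_distance: float):
    """Solve the tent-pole word problem, recording which (hypothetical)
    knowledge component each step of the solution exercises.

    Assumes the wire runs from the top of the pole to a ground stake placed
    stake_distance away from the base of the pole.
    """
    trace = []

    # Reading comprehension: pull the two givens out of the word problem.
    legs = (pole_height, stake_distance)
    trace.append(("extract-givens", legs))

    # Recognize the structure: the pole is perpendicular to the ground,
    # so the wire is the hypotenuse of a right triangle.
    trace.append(("recognize-right-triangle", "wire is the hypotenuse"))

    # Recall and apply the Pythagorean theorem: c^2 = a^2 + b^2.
    c_squared = legs[0] ** 2 + legs[1] ** 2
    trace.append(("apply-pythagorean-theorem", c_squared))

    # Solve for c by taking the square root.
    wire = math.sqrt(c_squared)
    trace.append(("take-square-root", wire))

    return wire, trace

wire, trace = solve_tent_problem(3, 4)
print(wire)  # 5.0
print([kc for kc, _ in trace])
```

The point is not the arithmetic but the trace: a tutor that knows this breakdown can tell which step a student got wrong, not just that the final answer was wrong.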

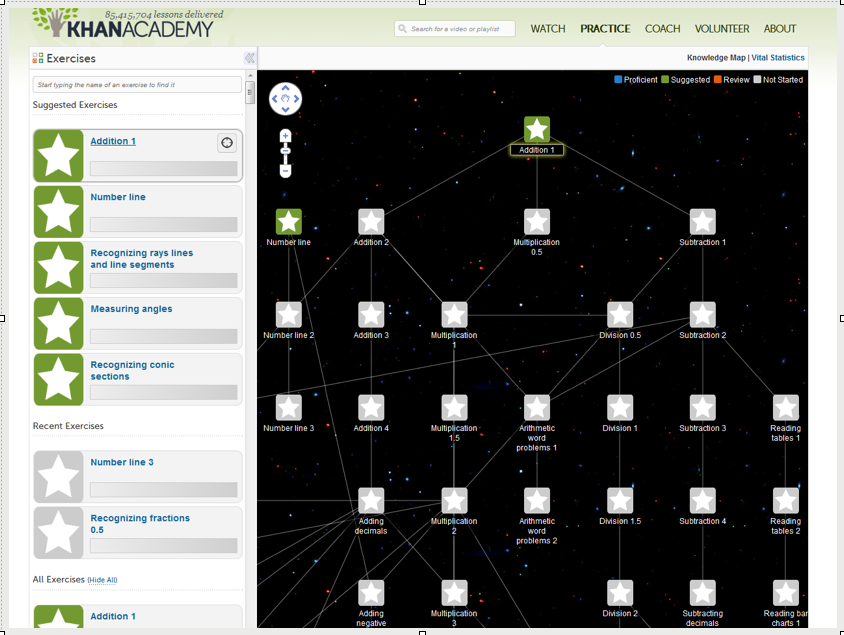

You can use CTA to string together these smaller skills into what amounts to a curriculum for a course. Here’s a skill tree from Khan Academy:

So far, there’s nothing particularly new or revolutionary here. This is breaking a curriculum down into fine-grained learning objectives with a map of prerequisites. That’s time-consuming work, but it’s not rocket science.
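Once the prerequisites are written down, turning them into a teaching sequence really is mechanical: any topological order of the prerequisite graph will do. The sketch below uses Python’s standard-library `graphlib.TopologicalSorter` (Python 3.9+); the skill names and prerequisite links are hypothetical and are not taken from Khan Academy’s actual tree.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical prerequisite map: each skill -> the skills that must come first.
PREREQS = {
    "square-numbers": set(),
    "square-roots": {"square-numbers"},
    "identify-right-triangles": set(),
    "pythagorean-theorem": {"square-numbers", "identify-right-triangles"},
    "solve-for-hypotenuse": {"pythagorean-theorem", "square-roots"},
}

def teaching_order(prereqs):
    """Return one sequence in which every skill follows all of its prerequisites.

    Raises CycleError if the map is circular (A requires B, which requires A).
    """
    return list(TopologicalSorter(prereqs).static_order())

print(teaching_order(PREREQS))

# A circular map cannot be turned into a curriculum.
try:
    teaching_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("circular prerequisites detected")
```

`static_order()` returns just one of the valid sequences; any order that respects the prerequisites would work. The hard part, as the rest of this post argues, is getting the map itself right.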

Or is it?

The thing is, the result of CTA is supposed to be a map of all the steps in the thought process that a successful problem solver goes through, and whether a given map actually captures those steps is an empirical matter. Further complicating the situation is the fact that expert problem solvers often go through a different process than novices do. Put another way, students do not yet have the mental shortcuts that their teachers have. That means experts, such as teachers, may be blind to knowledge components (the individual skills in that map) that students need to learn, precisely because they are experts.

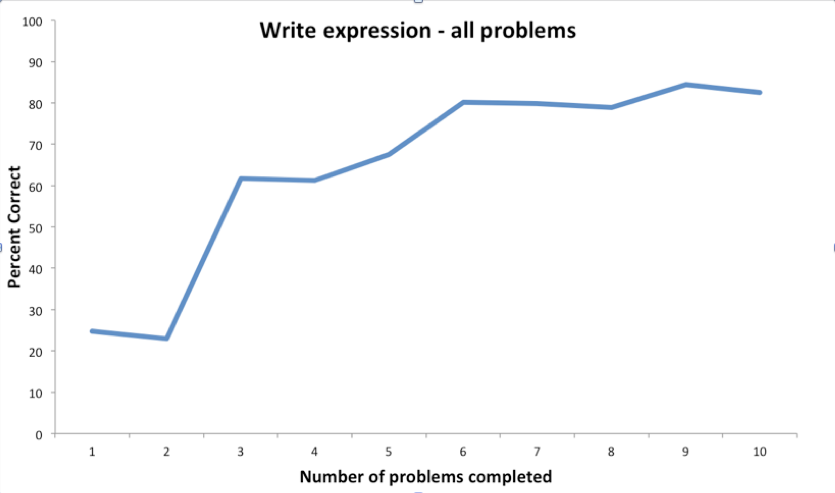

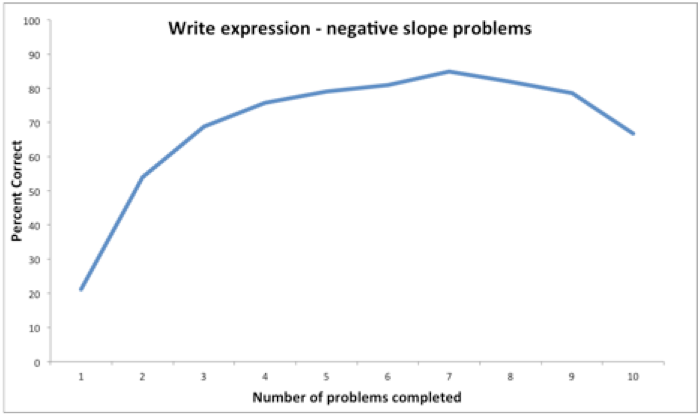

Here’s an example from the LearnLab partners meeting presentation by Steve Ritter of Carnegie Learning.[1] The company was puzzled over the results they were seeing in their tutoring software from students learning how to write expressions from word problems. Here’s what they saw:

The thing that troubled them was that weird downward jag between the first and second problems. Why was learning actually getting worse rather than better there? After doing a little digging, they tried separating the positive-slope problems from the negative-slope problems. Here’s what they saw:

The downward jag is gone. It turns out that novice problem solvers learn how to solve positive-slope problems separately from negative-slope problems. So when students got a negative-slope second problem after solving a positive-slope first problem, they couldn’t transfer what they had learned. They were effectively starting over. Expert problem solvers learn to see the commonality between these types of problems and don’t separate them out. In fact, it might never even occur to a skilled mathematician that a novice might not see these problem types as essentially the same. It’s an expert blind spot.
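The fix Ritter described amounts to changing how attempts are counted. A learning curve plots performance against each student’s first, second, third (and so on) opportunity to practice a knowledge component, so the curve depends on which knowledge-component model you assume. The Python sketch below uses a tiny, made-up log (not Carnegie Learning’s data) to show how the same attempts produce a dip under a single-skill model and rising curves once positive- and negative-slope problems are treated as separate skills.

```python
from collections import defaultdict

# Made-up attempt log: (student, problem_type, correct), in the order attempted.
# "pos" = positive-slope problem, "neg" = negative-slope problem.
LOG = [
    ("s1", "pos", 1), ("s1", "neg", 0), ("s1", "pos", 1), ("s1", "neg", 1),
    ("s2", "pos", 0), ("s2", "neg", 0), ("s2", "pos", 1), ("s2", "neg", 1),
]

def learning_curves(log, kc_of):
    """Mean correctness at each practice opportunity, per knowledge component.

    kc_of maps a problem type to a KC label. Opportunities are counted
    separately for every (student, KC) pair, so the curve depends on the
    KC model being tested.
    """
    attempts = defaultdict(int)          # (student, kc) -> attempts so far
    tally = defaultdict(lambda: [0, 0])  # (kc, opportunity) -> [n_correct, n]
    for student, problem_type, correct in log:
        kc = kc_of(problem_type)
        attempts[(student, kc)] += 1
        cell = tally[(kc, attempts[(student, kc)])]
        cell[0] += correct
        cell[1] += 1

    curves = defaultdict(dict)
    for (kc, opportunity), (n_correct, n) in sorted(tally.items()):
        curves[kc][opportunity] = n_correct / n
    return dict(curves)

# One KC for every expression-writing problem: the curve dips at opportunity 2.
print(learning_curves(LOG, lambda p: "write-expression"))
# {'write-expression': {1: 0.5, 2: 0.0, 3: 1.0, 4: 1.0}}

# Separate KCs for positive and negative slopes: both curves rise.
print(learning_curves(LOG, lambda p: p))
# {'neg': {1: 0.0, 2: 1.0}, 'pos': {1: 0.5, 2: 1.0}}
```

Real analyses use far more data and fit statistical models rather than reading raw averages; this only illustrates why the choice of knowledge components changes the shape of the curve.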

This matters. Let’s say a student gets a solidly average final grade of seventy-seven in her algebra class, but she utterly fails to learn one step that is critical to passing trigonometry. She will fail the next semester. And it is possible that nobody will know why. She may end up concluding that she is just “not good at trigonometry.” To sum up, getting the cognitive steps right for a novice is an empirical matter, has important consequences for learners, and is easy to get wrong.

Getting It Right

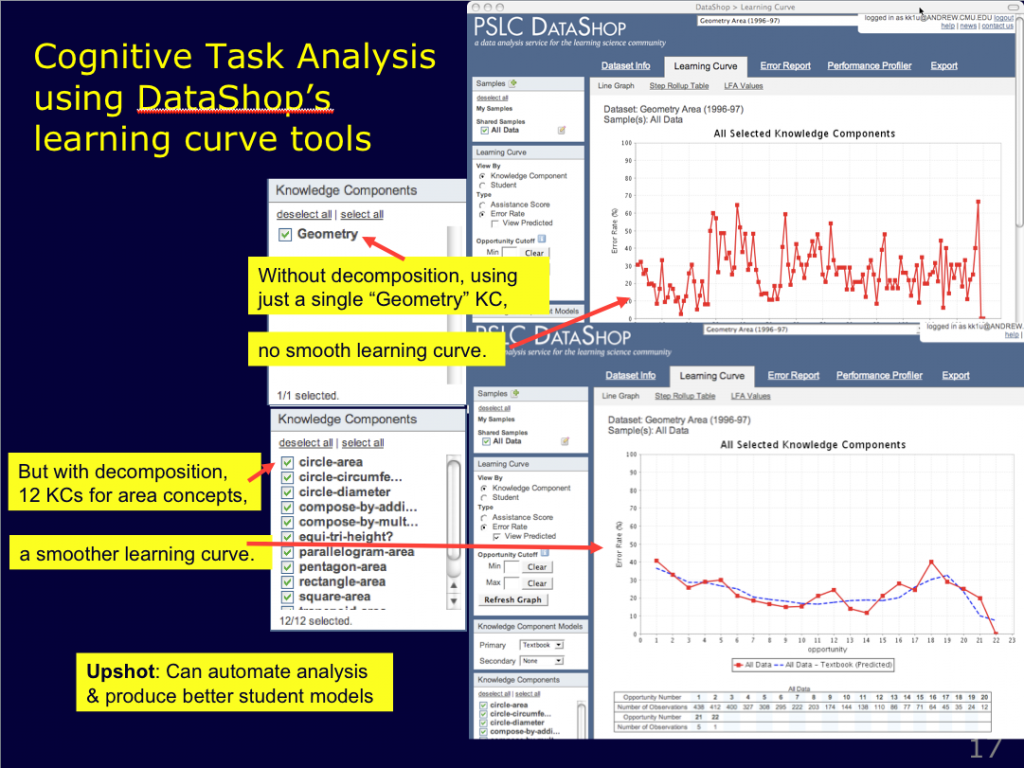

To deal with this thorny set of problems, the folks at LearnLab have developed a set of tools. To start with, they constructed an open data repository and analysis toolkit. Those graphs above were (I think) generated by DataShop, which analyzes student performance data in order to help deduce whether we have the task analysis right or whether we are missing something. That curve of percentage correct by number of problems solved is a learning curve. If we have the right knowledge components broken down and presented to the students (assuming they are both presented well and tested effectively), we should see students improving steadily over time. If we don’t see that curve, there’s a good chance that we don’t have the right knowledge components in the right order. DataShop lets us see the difference and also helps us mine the data to find the right order. Here’s an example of before-and-after analysis of a geometry class in DataShop (taken from Ken Koedinger’s overview presentation at the partners meeting):

Let me emphasize that this database is open (though it isn’t open source; this is a hosted service). Anyone can use the tool, and anyone can use the many datasets in the tool that have been made public by the researchers who created them. Keep in mind that Knewton has gotten $50 million in venture funding, partly on the strength of the promise that they would be able to collect data from a bunch of Pearson courses. DataShop has been collecting data for years.
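The rule of thumb described earlier (a sound knowledge-component model should produce curves that improve steadily) can be turned into a crude automated check. The sketch below is not how DataShop works internally; it simply flags opportunities where average correctness drops, and curves that barely rise at all. The thresholds `dip_tolerance` and `min_gain` are arbitrary assumptions chosen for illustration.

```python
def diagnose_curve(curve, dip_tolerance=0.05, min_gain=0.10):
    """Flag signs that a knowledge-component model may be wrong.

    curve maps opportunity number -> mean proportion correct.
    Returns the opportunities where performance fell by more than
    dip_tolerance, and whether the curve rose by less than min_gain overall.
    """
    opportunities = sorted(curve)
    dips = [
        later
        for earlier, later in zip(opportunities, opportunities[1:])
        if curve[earlier] - curve[later] > dip_tolerance
    ]
    overall_gain = curve[opportunities[-1]] - curve[opportunities[0]]
    return {"dips": dips, "flat": overall_gain < min_gain}

# Hypothetical curves, for illustration only.
print(diagnose_curve({1: 0.5, 2: 0.0, 3: 1.0, 4: 1.0}))  # {'dips': [2], 'flat': False}
print(diagnose_curve({1: 0.60, 2: 0.62, 3: 0.61}))       # {'dips': [], 'flat': True}
```

A dip suggests that two skills may be hiding under one label, as in the slope example; a flat curve suggests, by the same reasoning, that the knowledge components or their order may be off.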

Anyway, the statistical analysis is only part of the puzzle. Before it can be terribly useful, content needs to be tagged with preliminary guesses at those knowledge components so that the guesses can be tested. While the LearnLab folks are making progress on using machine learning to extract knowledge components in an automated way, as far as I can tell, most of the preliminary work is still done by hand. This can be difficult, in part because instructors aren’t always good at rattling off granular learning steps. But here these researchers have developed a clever trick. It turns out that instructors embed a lot of this knowledge in their construction of test question distractors. If you ask a teacher, “Why did you put that particular wrong answer in as an answer choice?”, the teacher will often reply, “Because students tend to have misconception X, and this answer will show if the student has that misconception.” Aha! There’s a knowledge component.
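Here is one way that trick could be captured as data: record, for each distractor, the misconception the instructor says it targets, and treat each misconception as a candidate knowledge component to be validated later against student data. This is a minimal Python sketch; the item, the answers, and the misconception labels are hypothetical, built on the tent-pole problem from earlier.

```python
# Hypothetical item: each distractor is annotated with the misconception the
# instructor said it was designed to reveal.
ITEM = {
    "prompt": "A 3 m tent pole is staked 4 m from its base. How long is the wire?",
    "correct": "5",
    "distractors": {
        "7": "adds the legs instead of applying the Pythagorean theorem",
        "25": "forgets to take the square root",
        "2.65": "treats the wire as a leg and subtracts the squares",
    },
}

def candidate_kcs(items):
    """Collect every annotated misconception as a candidate knowledge component."""
    return sorted({m for item in items for m in item["distractors"].values()})

def diagnose(item, answer):
    """Return None for a correct answer, the tagged misconception for a known
    distractor, or 'unexplained error' for anything else."""
    answer = answer.strip()
    if answer == item["correct"]:
        return None
    return item["distractors"].get(answer, "unexplained error")

print(diagnose(ITEM, "25"))  # forgets to take the square root
print(candidate_kcs([ITEM]))
```

Answers that match no tagged distractor may be useful too: a cluster of them could point to a misconception nobody wrote down.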

The LearnLab folks developed another free project called Cognitive Tutor Authoring Tools, or CTAT, that enables instructors to map out the knowledge components by building out a quiz. Actually, quiz isn’t quite the right word, because it implies that the activity is graded and summative. This is a tutor. It asks questions like those that would be on a quiz, but it is designed to help students figure out what they need to learn rather than test them on what they have already learned. When a student gives a wrong answer, the tutor gives a hint, and the student can try again. Anyway, here’s a video of CTAT authoring in action:

CTAT integrates with DataShop. So you can use it to work with a subject-matter expert to elicit the cognitive task analysis, build a tutor to actually deliver learning to students, and analyze the usage data from those students to improve the model. If anyone else in the world has a full-circle toolset for educational curriculum design, construction, and analysis (with or without empirical proof that it yields dramatic improvements in educational access and effectiveness), I haven’t seen it.
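The tutor behavior described above (a hint on a wrong answer, another try, and usage data that can feed back into analysis) can be sketched in a few lines. This is not CTAT’s actual data model or API; `TutorStep`, its fields, and the log format are hypothetical, meant only to show the loop: targeted feedback for anticipated wrong answers, escalating hints otherwise, and a record of every attempt.

```python
from dataclasses import dataclass, field

@dataclass
class TutorStep:
    """One step of a hypothetical hint-giving tutor problem."""
    kc: str                 # knowledge component this step exercises
    answer: str
    hints: list             # ordered from general to specific
    buggy_feedback: dict = field(default_factory=dict)  # anticipated wrong answer -> message
    hints_given: int = 0
    log: list = field(default_factory=list)             # (kc, response, correct) per attempt

    def next_hint(self):
        """Return the next hint, repeating the most specific one once exhausted."""
        hint = self.hints[min(self.hints_given, len(self.hints) - 1)]
        self.hints_given += 1
        return hint

    def attempt(self, response):
        """Check a response, log it, and return (correct, feedback)."""
        response = response.strip()
        correct = response == self.answer
        self.log.append((self.kc, response, correct))
        if correct:
            return True, "Correct!"
        if response in self.buggy_feedback:
            return False, self.buggy_feedback[response]
        return False, self.next_hint()

step = TutorStep(
    kc="apply-pythagorean-theorem",
    answer="5",
    hints=[
        "The pole meets the ground at a right angle.",
        "The wire is the hypotenuse, so c^2 = a^2 + b^2.",
        "c = sqrt(3^2 + 4^2).",
    ],
    buggy_feedback={"7": "Did you add the legs? The wire is not 3 + 4."},
)
print(step.attempt("7"))  # (False, 'Did you add the legs? The wire is not 3 + 4.')
print(step.attempt("6"))  # (False, 'The pole meets the ground at a right angle.')
print(step.attempt("5"))  # (True, 'Correct!')
print(step.log)
```

The `log` list is the kind of per-step, per-knowledge-component record that learning-curve analysis needs.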

Implications

There are a number of folks who should be really, really excited about the potential here. Schools should be excited because this approach enables them to cut the cost of educating a student (by reducing the amount of classroom time). The savings could be used to reduce tuition, to educate more students, or some combination of the two. Teacher and faculty unions should be excited because this is a model that lets them teach students effectively with less labor but without diminishing the value of the teacher.

xMOOC proponents should be excited too. While the xMOOC promoters are being coy about it, there is clearly the aspiration and implication that these courses will eventually replace for-credit traditional education for some. (Maybe you won’t go to MIT, but you’ll go to MIT Community College through edX.) The Gates Foundation is apparently soliciting proposals for MOOCs that can deliver educational effectiveness for remedial courses. And yet I have seen no evidence that any of the xMOOCs have cracked the access vs. effectiveness trade-off.[2] LearnLab and OLI have done it. If there is a MOOC developed that meets the Gates Foundation’s goals, I would bet money that it will do so by embedding Pittsburgh-style cognitive tutors.

Textbook publishers should be excited by this work too. The new hotness in the industry is adaptive learning, but it’s really hard to do authentically, effectively, and cost-effectively. LearnLab has demonstrated a way forward. A CTAT-like tool could be used by publishers to elicit from book authors the kind of testable granularity that is necessary to make adaptive learning work. Add a back end like DataShop, and you can fine-tune the content model. From there, it’s a relatively small step to adaptive algorithms and the ability to adjust the content the student sees based on achievement.
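As one illustration of that “relatively small step,” here is a sketch of Bayesian Knowledge Tracing, a well-established technique from the cognitive-tutor research literature for estimating, from a student’s right and wrong answers, whether she has mastered a knowledge component. The parameter values and the 0.95 mastery threshold below are assumptions for illustration; in practice they would be estimated per knowledge component from logged data. Nothing here describes how any particular publisher’s adaptive product works.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BKTParams:
    """Illustrative Bayesian Knowledge Tracing parameters (assumed values)."""
    p_init: float = 0.2    # P(L0): chance the skill is known before practice
    p_learn: float = 0.15  # P(T): chance of learning it at each opportunity
    p_guess: float = 0.2   # P(G): chance of a correct answer without the skill
    p_slip: float = 0.1    # P(S): chance of a wrong answer despite the skill

def update(p_known: float, correct: bool, params: BKTParams) -> float:
    """One BKT step: Bayesian update on the observed answer, then a learning transition."""
    if correct:
        evidence = p_known * (1 - params.p_slip)
        total = evidence + (1 - p_known) * params.p_guess
    else:
        evidence = p_known * params.p_slip
        total = evidence + (1 - p_known) * (1 - params.p_guess)
    posterior = evidence / total
    # The student may also learn the skill from this practice opportunity.
    return posterior + (1 - posterior) * params.p_learn

def next_activity(responses, params=BKTParams(), mastery=0.95):
    """Decide whether to move on or keep practicing, given a sequence of
    True/False responses on one knowledge component."""
    p_known = params.p_init
    for correct in responses:
        p_known = update(p_known, correct, params)
    return ("advance" if p_known >= mastery else "practice more"), p_known

# One wrong and one right answer: keep practicing.
print(next_activity([False, True]))
# Three correct answers push P(known) above 0.95 under these parameters.
print(next_activity([True, True, True]))
```

The adaptive part is the decision at the end: the content a student sees next depends on the estimate, which is only as good as the knowledge-component model underneath it.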

And finally, the OER world should be excited. A while back, David Wiley posted a warning that the OER movement could be in trouble if it doesn’t respond to the wave of diagnostic and adaptive products coming from the publishers. OLI and LearnLab have a proven model. These tools could be used to crowd-source the development of, essentially, competency metadata spines for major subjects. Furthermore, it could be possible to tag pieces of OER content and make them discoverable through emerging efforts like LRMI and the Learning Registry. Once content is appropriately designed, tagged, and tested at scale, building out a presentation layer for students and teachers that includes analytics dashboards and even adaptive elements becomes relatively easy.
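To make the tagging idea concrete: LRMI’s properties have been adopted into schema.org, where a resource’s `educationalAlignment` property points to an `AlignmentObject` naming the competency it teaches or assesses. The Python sketch below builds such a record as JSON-LD. The resource, the competency, and the example.org URLs are hypothetical, and publishing to the Learning Registry is not shown.

```python
import json

def tag_oer_resource(name, url, competency_name, competency_url, framework,
                     alignment_type="teaches"):
    """Build a schema.org/LRMI-style JSON-LD description linking a piece of
    OER content to one competency in a (hypothetical) competency spine."""
    return {
        "@context": "https://schema.org",
        "@type": "CreativeWork",
        "name": name,
        "url": url,
        "educationalAlignment": {
            "@type": "AlignmentObject",
            "alignmentType": alignment_type,  # e.g. "teaches" or "assesses"
            "educationalFramework": framework,
            "targetName": competency_name,
            "targetUrl": competency_url,
        },
    }

record = tag_oer_resource(
    name="Finding the hypotenuse: guided practice",
    url="https://example.org/oer/hypotenuse-practice",
    competency_name="Solve for the hypotenuse of a right triangle",
    competency_url="https://example.org/spines/geometry#solve-for-hypotenuse",
    framework="Example geometry competency spine",
)
print(json.dumps(record, indent=2))
```

A real resource would likely carry several alignments (a list of `AlignmentObject`s), one per knowledge component it teaches or assesses.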

[1] Carnegie Learning is a for-profit spin-off from Carnegie Mellon University that uses some of the techniques pioneered at LearnLab.

[2] cMOOCs haven’t either, but that may not be a fair criticism. I don’t think that’s the problem they are trying to solve right now. Rather than coming up with a better way to achieve the same goals as a traditional education, I think a lot of the cMOOC proponents might say that they’re trying to come up with a way to achieve better goals, or, at the very least, important goals that are not well served by the traditional educational system.

“Teacher and faculty unions should be excited because this is a model that lets them teach students effectively with less labor but without diminishing the value of the teacher.”

It seems to me that the ‘value of the teacher’ is conserved under this scenario only if each teacher teaches more students – hence no less labor. For tenure-track faculty, this is probably as it should be. For adjuncts, whose numbers I can envision proportionally increasing under this regime, it just means more of the same.

Am I missing something?

This doesn’t diminish the benefit, if it comes to pass, of teaching more students at the same cost, or the same number of students for less. It just means that Utopia is still a ways off.

How much of the time savings would be used to teach more students and how much would be used to return teaching loads to something less insane than we see at community colleges, for example, is something that would have to be negotiated between people rather than solved by technology. With a two-thirds seat time savings, it seems to me that there is room to do a little of both. The technology makes the negotiation easier by creating a larger pie to work with. But my point was really more general. There is sometimes a fear that the idea is to use technology to replace teachers. These course designs explicitly include teachers, and they include them for high-value one-on-one work. I dislike the expression “flipping the classroom” because it suggests that there exists only the dichotomy of lecture and homework, but the Pittsburgh approach supports the spirit of that idea by giving teachers the time and the tools to focus their energy on the high-value teaching that is tailored to the needs of the specific students in that particular class.